AI Usage Costs Are Rising. Here's Why Architecture Matters More Than Prompts.

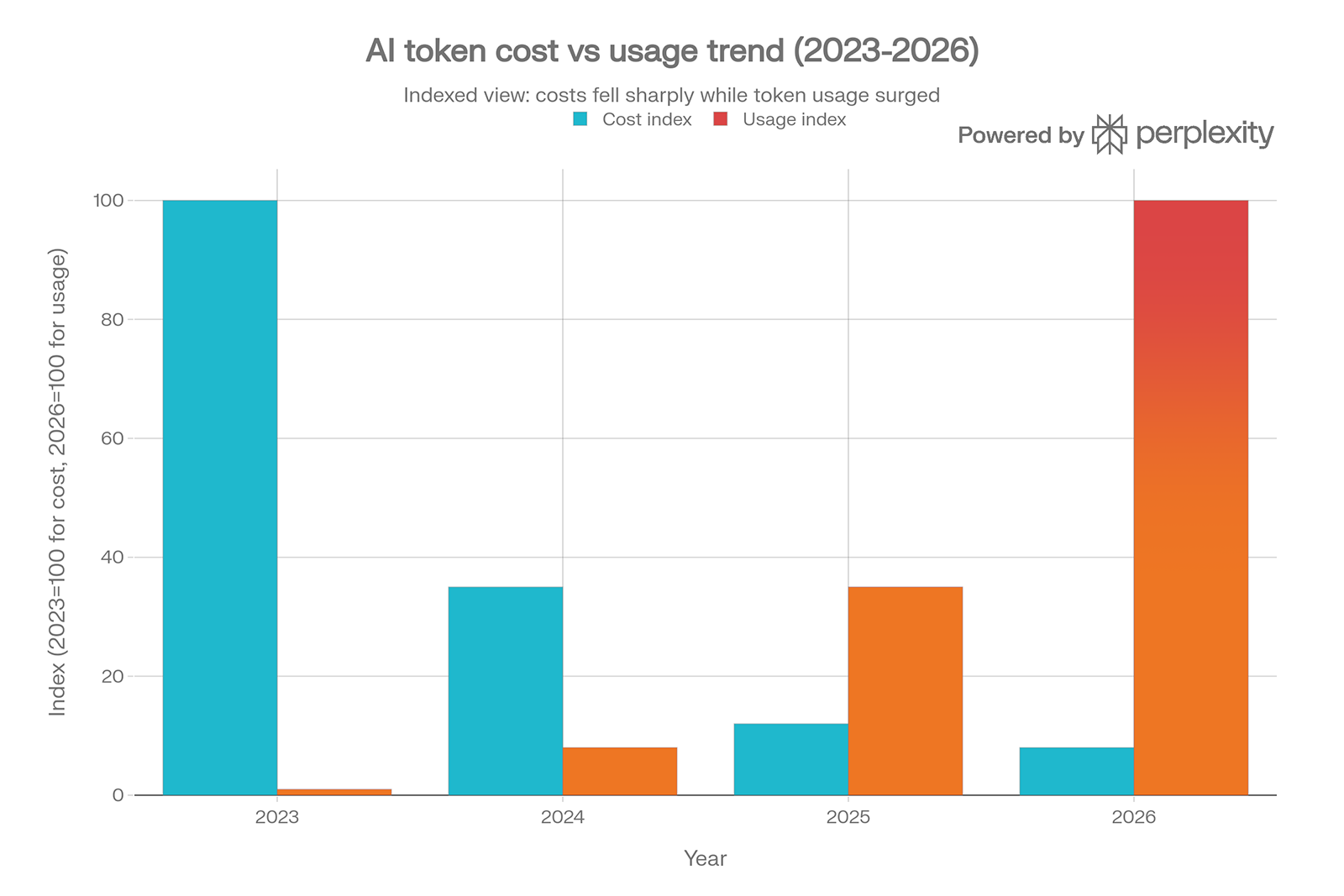

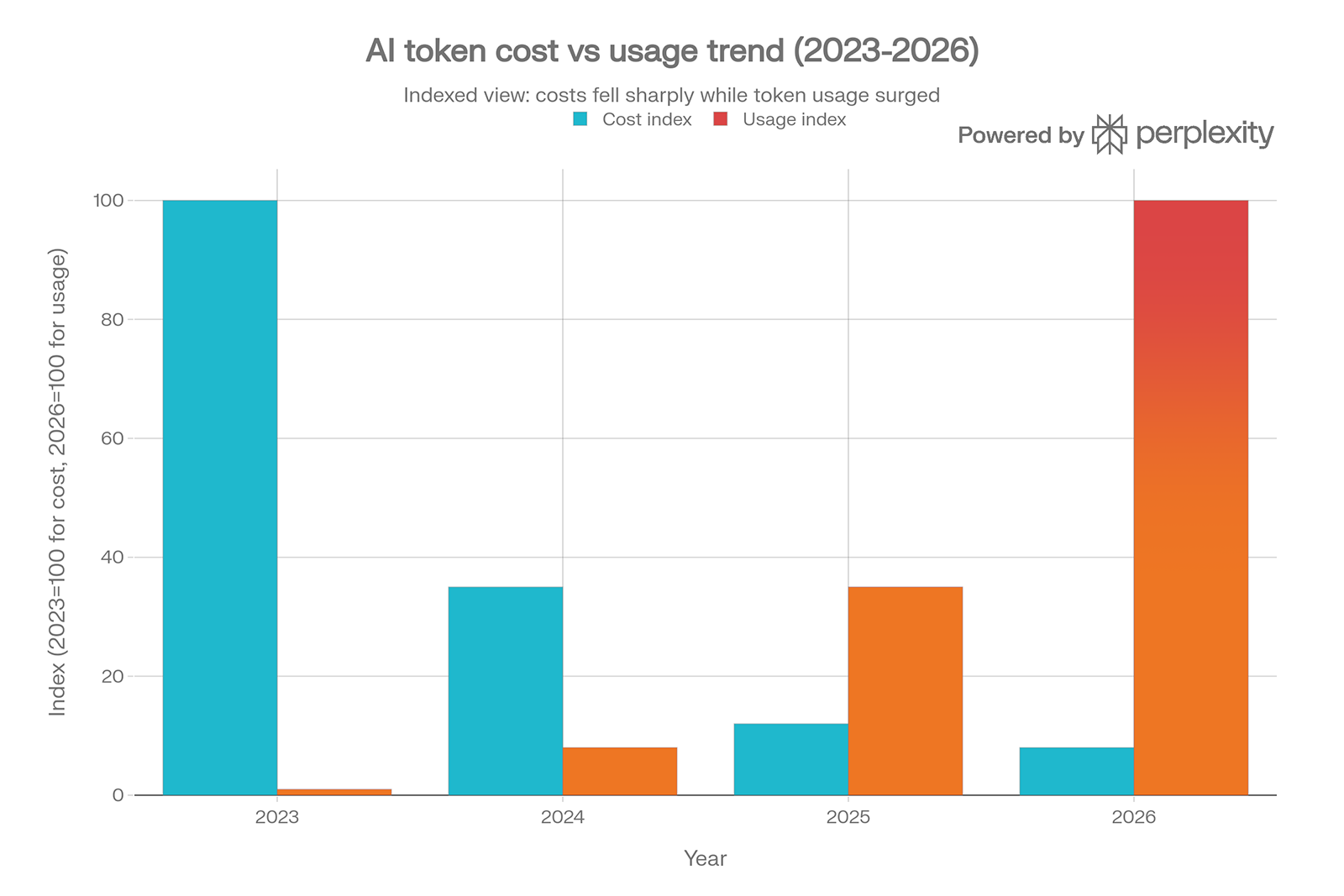

The price of running AI has dropped dramatically. OpenAI's API costs fell over 90% between 2024 and 2026. Anthropic and Google matched the cuts.

On paper, AI has never been cheaper to use.

Enterprise AI budgets are rising anyway. The FinOps Foundation's 2026 State of FinOps Report found that 73% of organizations reported AI costs exceeded their original budget projections.

Average enterprise AI spend has grown from $1.2 million annually in 2024 to $7 million in 2026. Some Fortune 500 companies are carrying monthly inference bills in the tens of millions.

The paradox is not a mystery once you understand what changed. The cost per token fell. The number of tokens consumed exploded.

And the reason for that explosion is almost never about prompts.

What Is Actually Driving the Bill

When organizations moved from experimental chatbots to agentic workflows, the cost model changed in ways most teams did not anticipate. A simple query might consume a few hundred tokens.

An agentic workflow handling the same task can trigger dozens of model calls: planning steps, memory lookups, retrieval queries, validation passes, and rewrites when an output seems off.

Each step is another line on the API bill.

One customer support agent handling a single issue might touch the model twelve times before producing a response.

Under chatbot-level cost assumptions, the economics looked manageable. At agentic scale, across thousands of daily interactions, the same assumptions become a budget problem nobody modeled.
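Here is a back-of-the-envelope sketch of how the multiplication works. Every input is a hypothetical assumption chosen for illustration: a blended price of $3 per million tokens, 400 tokens per model call (the "few hundred tokens" of a simple query), 5,000 interactions a day, and the twelve calls per issue from the support-agent example.

```python
# All inputs are illustrative assumptions, not figures from any report.
PRICE_PER_MILLION_TOKENS = 3.00  # assumed blended $ per 1M tokens
TOKENS_PER_CALL = 400            # "a few hundred tokens" per model call
INTERACTIONS_PER_DAY = 5_000     # assumed daily volume
DAYS_PER_MONTH = 30


def monthly_cost(calls_per_interaction: int) -> float:
    """Estimated monthly inference spend in dollars, assuming every call is the same size."""
    tokens = calls_per_interaction * TOKENS_PER_CALL * INTERACTIONS_PER_DAY * DAYS_PER_MONTH
    return tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS


chatbot = monthly_cost(calls_per_interaction=1)   # one query, one call
agent = monthly_cost(calls_per_interaction=12)    # twelve calls per issue
print(f"chatbot: ${chatbot:,.0f}/month, agent: ${agent:,.0f}/month ({agent / chatbot:.0f}x)")
```

With these made-up inputs the gap is 12x ($180 versus $2,160 a month). The flat per-call size makes this a lower-end estimate: agent steps often resend the growing conversation and retrieved documents, so later calls tend to be larger than the first.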

The FinOps Foundation put it plainly: enterprises that piloted AI with single-query chatbots and then deployed multi-step agentic workflows at scale experienced cost multiplications they had not anticipated.

The ROI calculations that justified the deployment were built on the wrong numbers.

Why Prompts Are Not the Answer

The instinct when bills come in high is to optimize prompts. Write tighter instructions. Trim what goes into the context window.

Tune the wording until outputs improve.

Prompt optimization is real and worth doing. It is also a tactical response to a structural problem.

A well-crafted prompt makes a single interaction more efficient. It does not determine whether you are using a $15-per-million-token frontier model for a task a $0.20 model could handle. It does not prevent the same question from being sent to the API seventeen times across different teams with no shared caching layer.

It does not stop an agentic workflow from making redundant calls when the system was not designed with clear handoff points.

It does not address the fact that your organization has accumulated API keys across a dozen tools, each running independently, with no unified view of what is being consumed or why.

Those are architecture problems. Prompts cannot fix them.

Where Architectural Decisions Determine Cost

The organizations managing AI spend effectively in 2026 are not doing it through better prompting.

They are making deliberate decisions about how their systems are built.

Model selection by task is one of the highest-leverage decisions available. Research on agentic architectures shows that a Plan-and-Execute pattern, in which a capable frontier model creates the strategy and cheaper, faster models carry out the execution, can reduce costs by up to 90% compared with routing everything through a single model. Most organizations are not doing this because nobody owns the decision.
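A minimal sketch of per-task routing, assuming a workflow can be described as a list of (task kind, token count) pairs. The $15 and $0.20 per-million-token prices echo the figures earlier in this article; the tier names, the token counts, and the rule "only planning goes to the frontier model" are hypothetical choices for illustration, not a prescribed policy.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Model:
    name: str                      # placeholder tier name, not a real model ID
    usd_per_million_tokens: float


FRONTIER = Model("frontier-tier", 15.00)
SMALL = Model("small-tier", 0.20)

Call = tuple[str, int]             # (task kind, tokens used by the call)


def workflow_cost(calls: list[Call], route: Callable[[str], Model]) -> float:
    """Total dollars for a workflow, given a policy that picks a model per task kind."""
    return sum(tokens / 1_000_000 * route(kind).usd_per_million_tokens for kind, tokens in calls)


def plan_and_execute(kind: str) -> Model:
    # The frontier model writes the plan; every execution step goes to the cheap tier.
    return FRONTIER if kind == "plan" else SMALL


def frontier_only(kind: str) -> Model:
    # Baseline: everything goes through the same frontier model.
    return FRONTIER


# Hypothetical workflow: one 2,000-token planning call, ten 1,500-token execution calls.
calls: list[Call] = [("plan", 2_000)] + [("execute", 1_500)] * 10
routed = workflow_cost(calls, plan_and_execute)
baseline = workflow_cost(calls, frontier_only)
print(f"routed ${routed:.3f} vs single-model ${baseline:.3f}: {1 - routed / baseline:.0%} cheaper")
```

With these made-up numbers the split comes out about 87% cheaper. The real savings depend on how much of the token volume sits in execution steps and whether the smaller model actually handles those steps well, which has to be tested task by task.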

Caching is another. One case study found that processing 50,000 documents per month cost $8,000 with caching versus $45,000 without it, a more than fivefold difference on the same workload. Semantic caching, which reuses stored responses for similar requests rather than only identical ones, can reduce API calls by 30 to 50% for repetitive business processes. This is not a prompt engineering problem. It is a system design decision.

API sprawl compounds both. When individual teams are running their own integrations against their own API keys with no shared governance layer, there is no visibility into duplicate spend, no ability to enforce model selection policies, and no mechanism for identifying workflows that have drifted into inefficiency. The FinOps Foundation noted that organizations that cannot attribute AI costs to specific teams and outcomes cannot govern them. Attribution is an architecture problem.
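Attribution mostly comes down to routing every call through one metering point that knows who made it. Here is a minimal sketch of such a shared layer, assuming each call is tagged with a team and a workflow. The `Gateway` class, its per-team model allow-list, the tier names, and the prices are hypothetical, and a real gateway would also proxy the request itself rather than only record it.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Gateway:
    """Hypothetical shared layer that meters every team's model usage."""
    prices: dict[str, float]         # model name -> $ per 1M tokens
    allowed: dict[str, set[str]]     # team -> models the team may use
    spend: defaultdict = field(default_factory=lambda: defaultdict(float))

    def record(self, team: str, workflow: str, model: str, tokens: int) -> float:
        """Enforce the model policy, then attribute the call's cost to (team, workflow)."""
        if model not in self.allowed.get(team, set()):
            raise PermissionError(f"team {team!r} may not use model {model!r}")
        cost = tokens / 1_000_000 * self.prices[model]
        self.spend[(team, workflow)] += cost
        return cost

    def report(self) -> list[tuple[str, str, float]]:
        """Spend per (team, workflow), largest first."""
        rows = [(team, wf, cost) for (team, wf), cost in self.spend.items()]
        return sorted(rows, key=lambda row: row[2], reverse=True)


gw = Gateway(
    prices={"frontier-tier": 15.00, "small-tier": 0.20},
    allowed={"support": {"small-tier"}, "research": {"frontier-tier", "small-tier"}},
)
gw.record("support", "ticket-triage", "small-tier", 2_000_000)
gw.record("research", "market-scan", "frontier-tier", 100_000)
for team, workflow, cost in gw.report():
    print(f"{team:<10} {workflow:<15} ${cost:,.2f}")
```

Because every call passes through one place, a request from `support` to `frontier-tier` raises `PermissionError` instead of silently running up the bill, and the report answers "who spent what, on which workflow" directly.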

We believe that business is built on transparency and trust, and that good software is built the same way.

That applies directly here. A system you cannot see is a system you cannot trust, and a system you cannot trust will cost more than it should.

What This Looks Like When It Goes Wrong

Gartner predicts that 40% of agentic AI deployments will be canceled by 2027 due to rising costs, unclear value, or poor risk controls.

The failure mode being described is not that the model performed badly. It is that the system around the model was not designed to be governed.

The pattern is consistent across the organizations hitting this wall. AI was introduced as a capability, not designed as a system.

Tools were added to support immediate needs. Each team built their own integrations. Nobody drew the architecture first. The result is a diffuse, ungoverned set of AI touchpoints that produce real costs with no clear ownership and no reliable way to measure whether the outcomes justify the spend.

This is the same structural problem that shows up in data systems, in software implementations, in every situation where capability accumulates faster than design.

The Questions Worth Asking Now

If your AI spend is growing and you cannot clearly explain what is driving it, these questions will surface where the architecture work needs to happen.

Do you know which model is being used for which tasks, and why? If the answer is "whatever the tool defaults to," there is a governance gap that is almost certainly costing money.

Is your organization caching outputs that do not need to be regenerated? Without caching, repeated queries against the same underlying data pay full price for information the system has already produced.

How many separate API integrations are running across your tools and teams? Each one is a cost center with no visibility into the others. Consolidation is not just a cost reduction. It is how you get a picture of what your AI investment is actually doing.

Are your agentic workflows designed with clear task boundaries and handoff points? An agent that re-queries the model because the workflow did not define where one step ends and the next begins is burning tokens on coordination overhead, not business value.
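One way to make those boundaries explicit is to give every step a completion check (its handoff point) and a call budget, so a step that keeps failing stops instead of re-querying indefinitely. The `Step` structure, the default budget of two calls, and the stubbed `run` functions below are hypothetical; in practice `run` would wrap a model call.

```python
from dataclasses import dataclass
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when a step uses up its call budget without producing valid output."""


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]       # would wrap the model call(s) for this step
    is_done: Callable[[dict], bool]   # explicit completion check: where this step ends
    max_calls: int = 2                # cap on re-queries for this step


def run_workflow(steps: list[Step], state: dict) -> tuple[dict, int]:
    """Run steps in order, handing only validated output to the next step."""
    total_calls = 0
    for step in steps:
        for _ in range(step.max_calls):
            total_calls += 1
            output = step.run(state)
            if step.is_done(output):
                state = {**state, **output}   # handoff: merge the validated output
                break
        else:  # loop finished without a break: the budget ran out
            raise BudgetExceeded(f"step {step.name!r} not done after {step.max_calls} calls")
    return state, total_calls


steps = [
    Step("classify", run=lambda s: {"category": "billing"},
         is_done=lambda out: "category" in out),
    Step("draft", run=lambda s: {"reply": f"Re: {s['category']} issue"},
         is_done=lambda out: bool(out.get("reply"))),
]
final, calls = run_workflow(steps, {"ticket": "I was charged twice"})
print(final["reply"], calls)  # Re: billing issue 2
```

The design choice is that a step's "done" condition is written down rather than left to the agent's judgment, so the number of model calls per issue has a known upper bound.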

Can you connect AI spend to business outcomes? Token consumption is a cost metric. It is not a value metric. Organizations that cannot tie inference spend to resolved tickets, closed deals, or reduced processing time are not managing AI costs. They are managing a growing line item.
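Tying spend to outcomes can start as a simple join between billing data and outcome data, keyed by workflow. In this hypothetical sketch the workflow names and all figures are made up; a workflow with spend but no tracked outcome comes back as `None`, which is exactly the "growing line item" case.

```python
from typing import Optional


def cost_per_outcome(spend_usd: dict[str, float], outcomes: dict[str, int]) -> dict[str, Optional[float]]:
    """Dollars of inference spend per business outcome, for each workflow.

    None flags a workflow that is spending money with no outcome measured against it.
    """
    return {
        workflow: (cost / outcomes[workflow] if outcomes.get(workflow) else None)
        for workflow, cost in spend_usd.items()
    }


# Hypothetical monthly figures: spend from the billing side, outcomes from the business side.
spend = {"support-agent": 12_000.0, "sales-research": 8_000.0, "doc-summaries": 3_000.0}
outcomes = {"support-agent": 4_000, "sales-research": 20}   # resolved tickets, closed deals
print(cost_per_outcome(spend, outcomes))
# {'support-agent': 3.0, 'sales-research': 400.0, 'doc-summaries': None}
```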

The era of subsidized AI pricing is ending. OpenAI's head of ChatGPT described the company's current pricing model as accidental and signaled that it would evolve significantly.

The organizations that have built AI into their systems deliberately, with clear architecture and real cost governance, will absorb that shift without disruption.

The ones that treated AI as a capability to add rather than a system to design will feel it.