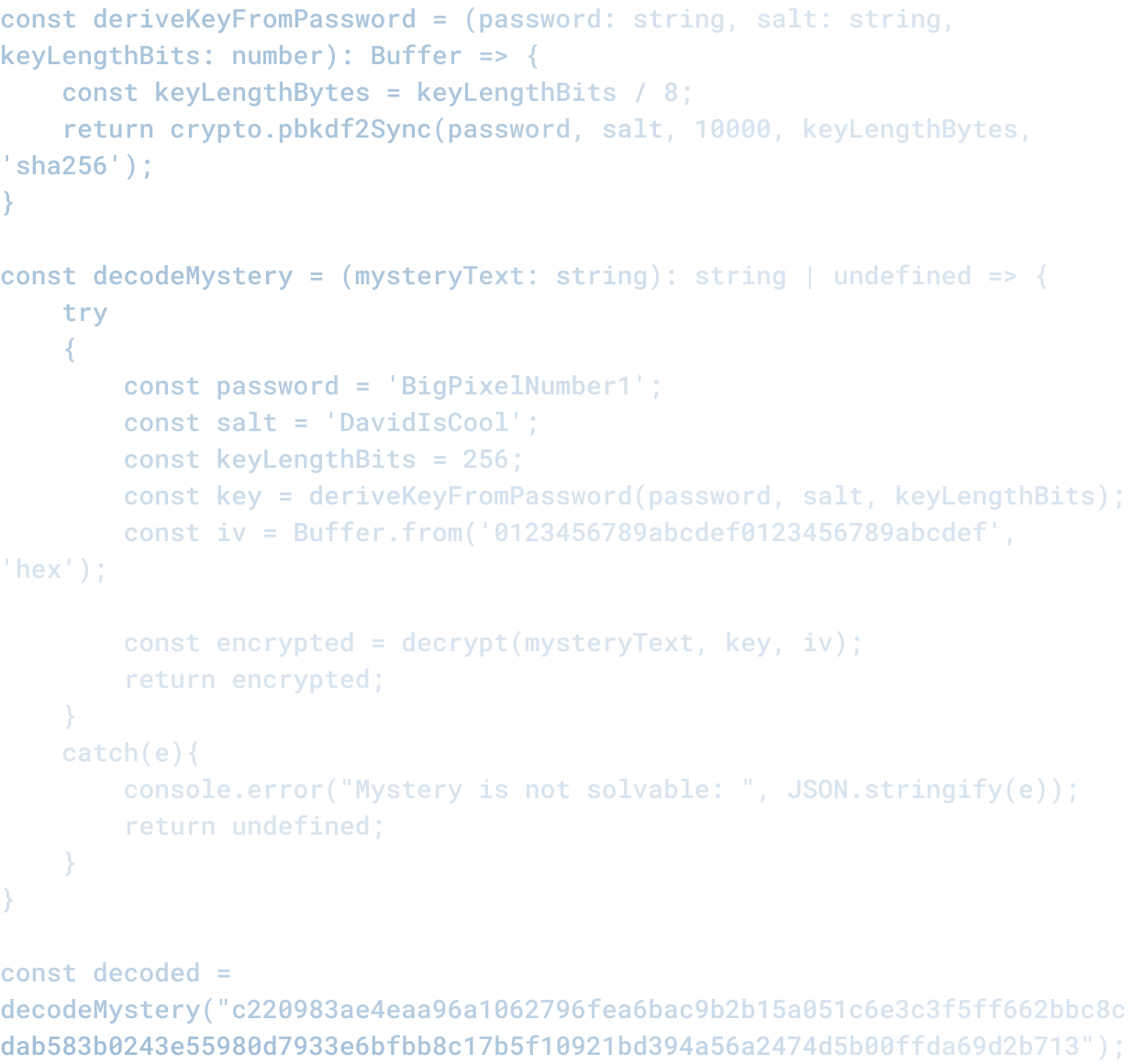

How Do You Measure AI ROI? Why Most AI Rollouts Fail to Show Real Business Impact

Most teams implementing AI do not measure whether it actually improves business outcomes.

This is a problem. Not because measurement is inherently important, but because measurement is how you know whether something is working.

Many organizations have implemented generative AI tools over the past 18 months. They have spent significant money and time. They have asked their teams to integrate new tools into their workflows. And most have not formally answered whether any of it improved business results.

The Default Is Not Measurement

Most AI implementations follow a predictable pattern:

1. Leadership hears that competitors are using AI and becomes concerned about falling behind.

2. A team or department experiments with a tool (ChatGPT, Claude, etc.).

3. The tool seems useful in isolated use cases. A marketer writes faster. A developer gets autocomplete. Someone drafts an email quicker.

4. Leadership decides to roll out the tool more broadly.

5. The organization integrates the tool into workflows and expects it to improve productivity.

6. Everyone moves on to the next thing.

At no point does anyone formally measure whether productivity actually improved, whether the quality of work changed, or whether the business is actually better off.

The rollout happens, resources get allocated, and then the measurement question gets deferred. Indefinitely.

Why Measurement Gets Skipped

Most organizations skip measurement for a few reasons:

It is complicated. Productivity is hard to measure. You can count the words someone wrote or the code they checked in, but that does not tell you whether the work is better, faster, or more valuable. You need leading and lagging indicators, control groups, and time to see results.

It is not obvious what to measure. Should you measure time per task? Quality of output? Customer satisfaction? Revenue impact? Most organizations pick the easiest metric (like adoption rate) rather than the one that actually matters.

It shows the tool might not be working. This is the unstated concern. If you measure productivity and the tool does not improve it, you have to admit the rollout did not work. It is easier to assume it is working and move on.

Results take time. The benefits of AI integration are not immediate. They compound over time as workflows change and teams learn how to use the tools. Most organizations are looking for quarterly results, not multi-year improvements.

What Measurement Should Actually Look Like

If you are implementing AI, here is what measurement should include:

Baseline metrics. Before implementing the tool, measure the current state. How long does it take to complete the work? What does quality look like? What are teams complaining about? You cannot measure improvement without a baseline.

Leading indicators. What do you expect to change first? If AI makes coding faster, time-per-task should decrease. If it improves quality, code review comments or bug count should shift. Leading indicators show whether the change is happening.

Lagging indicators. What is the business outcome you actually care about? Revenue, customer satisfaction, employee retention, time-to-market, cost per unit, or something else. These are harder to move but they are what actually matters.

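Cohort analysis. Compare teams using the tool to similar teams not using it over the same period. A comparison group is the most reliable way to isolate the impact of the tool from other factors, such as seasonality, staffing changes, or a new process introduced at the same time.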

Qualitative feedback. Ask teams what changed. What got easier? What is harder? What did they stop doing? What unexpected impacts did they notice? Numbers tell you what changed. Conversations tell you why.
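To make the baseline and cohort ideas concrete, here is a minimal sketch of a difference-in-differences comparison: the change in a pilot team's average time per task minus the change in a comparison team's average over the same period. The function name, the team labels, and every number below are hypothetical and invented for illustration. Real data would need larger samples and a check that the two teams are actually comparable.

```python
from statistics import mean


def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Change in the pilot team's mean minus change in the comparison team's mean."""
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


# Hypothetical hours-per-task samples (illustrative only, not real data).
pilot_before = [10.0, 12.0, 11.0, 11.0]       # pilot team, before the tool
pilot_after = [8.0, 9.0, 9.0, 10.0]           # pilot team, after the tool
comparison_before = [10.0, 11.0, 12.0, 11.0]  # similar team, same "before" period
comparison_after = [10.0, 10.0, 11.0, 11.0]   # similar team, never given the tool

effect = diff_in_diff(pilot_before, pilot_after, comparison_before, comparison_after)
print(f"Estimated change attributable to the tool: {effect:+.2f} hours per task")
```

In this made-up example the pilot team improved by 2.0 hours per task, but the comparison team also improved by 0.5 hours without the tool, so only 1.5 hours of the improvement can plausibly be credited to it. Looking at the pilot team alone would have overstated the effect.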

Most Results Are Mixed

When organizations actually measure, the results are usually mixed. The tool improves some things and makes other things worse. A developer might code faster but spend more time reviewing AI-generated code for bugs. A marketer might write more drafts but spend more time editing them. A salesperson might respond to emails faster but the emails sound generic.

These mixed results are not failures. They are data. They tell you:

- Where the tool is actually valuable

- Where it is not worth the effort

- How to change your workflows to make it work better

- Whether the overall impact is positive

Without measurement, you cannot answer any of those questions. You just assume it is working because it seems useful.
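One way to see a mixed result in numbers is to net the time saved on the first pass against the time added downstream. The sketch below does that for two roles. The function name and all minute values are hypothetical, chosen only to show how the same tool can come out positive for one workflow and negative for another.

```python
def net_minutes_saved(draft_before, draft_after, review_before, review_after):
    """Minutes saved on drafting minus minutes added to review or editing."""
    drafting_saved = draft_before - draft_after
    review_added = review_after - review_before
    return drafting_saved - review_added


# Hypothetical minutes per task (illustrative only, not real data).
developer = net_minutes_saved(draft_before=120, draft_after=80,
                              review_before=30, review_after=55)
marketer = net_minutes_saved(draft_before=60, draft_after=35,
                             review_before=20, review_after=50)

for role, net in [("developer", developer), ("marketer", marketer)]:
    verdict = "net gain" if net > 0 else "net loss or break-even"
    print(f"{role}: {net:+d} minutes per task ({verdict})")
```

With these invented numbers the developer comes out 15 minutes ahead per task, while the marketer ends up 5 minutes behind once the extra editing is counted. Time is only one dimension, so a result like this still needs the quality and qualitative checks described earlier.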

The Cost of Skipping Measurement

The cost of not measuring is significant:

You might keep using a tool that is not helping. You continue to invest time and money in something with no ROI.

You might miss the impact on other parts of the business. A tool that makes one team faster might make another team slower (by creating review overhead, or by changing communication patterns). Without measurement, you do not see the trade-offs.

You might not learn how to use it effectively. If you do not measure and adjust, you stay in the "this seems useful" phase and never get to the "this is a core part of how we work" phase.

You might lose credibility with leadership. If you implement AI without measurement, and leadership asks "did it work," you cannot answer.
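If leadership does ask whether it worked, even a rough calculation beats no answer. The sketch below uses the standard return-on-investment formula, (benefit minus cost) divided by cost, and assumes the only benefit counted is hours saved valued at a loaded hourly cost. The function name and the input values are hypothetical, and a real estimate would also need to account for rollout time, training, and review overhead.

```python
def simple_roi(hours_saved_per_month, hourly_cost, tool_cost_per_month):
    """Return ROI as a fraction: (benefit - cost) / cost."""
    benefit = hours_saved_per_month * hourly_cost
    return (benefit - tool_cost_per_month) / tool_cost_per_month


# Hypothetical inputs (illustrative only): 20 measured hours saved per month,
# a $50 loaded hourly cost, and $600 per month in licenses.
roi = simple_roi(hours_saved_per_month=20, hourly_cost=50.0, tool_cost_per_month=600.0)
print(f"Monthly ROI: {roi:.0%}")
```

The hours-saved input is the part that requires measurement. Without a baseline and a comparison group, that number is a guess, and so is the ROI.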

How to Start

Start small. Pick one team or one workflow. Measure it carefully. Then use what you learn to roll out more broadly.

Here is a simple framework:

1. Define the baseline. How long does this work take now? What does quality look like? What are the pain points?

2. Make one change (introduce AI). Give it 4-6 weeks for the team to learn the tool and adjust their workflow.

3. Measure the leading indicators. Did time-per-task improve? Did quality metrics change? What did the team notice?

4. Measure the lagging indicators. What is the business impact? Did revenue go up, did costs go down, or did customer satisfaction improve?

5. Ask the team. What changed? What works? What does not?

6. Decide. Did it work? If yes, how do you scale? If no, what would need to change?
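The quantitative steps of the framework (baseline, leading indicators, lagging indicators, decide) can be sketched as a small scorecard. This is one possible shape, not a prescribed method: the `Metric` class, the metric names, and every value are hypothetical, and the sketch assumes each baseline is non-zero so that a percent change is defined.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    baseline: float        # measured before introducing the tool (step 1)
    current: float         # measured after the adjustment period (steps 3 and 4)
    higher_is_better: bool

    def percent_change(self) -> float:
        # Assumes baseline is non-zero.
        return (self.current - self.baseline) / self.baseline * 100

    def improved(self) -> bool:
        if self.higher_is_better:
            return self.current > self.baseline
        return self.current < self.baseline


# Hypothetical metrics and values (illustrative only, not real data).
metrics = [
    Metric("hours per task", baseline=11.0, current=9.0, higher_is_better=False),
    Metric("bugs per release", baseline=4.0, current=5.0, higher_is_better=False),
    Metric("customer satisfaction (1-5)", baseline=4.0, current=4.2, higher_is_better=True),
]

for m in metrics:
    status = "improved" if m.improved() else "did not improve"
    print(f"{m.name}: {m.percent_change():+.1f}% ({status})")

improved_count = sum(m.improved() for m in metrics)
print(f"{improved_count} of {len(metrics)} metrics improved")
```

A scorecard like this does not make the decision in step 6. It puts the trade-offs on one page (here, faster work and happier customers, but more bugs) so the conversation with the team in step 5 starts from numbers rather than impressions.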

The pattern of measure -> adjust -> measure again -> adjust again is how you actually get value from AI.

The Real Metric

The metric that matters is simple: Are you better off?

That is it. Not "are you using AI?" Not "have you implemented the tool?" But "is your business better as a result of using this tool?"

Most organizations have not answered that question. And that is the biggest risk of AI implementation. Not that the technology fails. But that you spend months and money implementing something and never actually know whether it worked.
