The 2026 Shift From AI Adoption to AI Accountability

For the last 18 months, the conversation about AI in business has been about adoption. Which tools should we use? How do we get people to use them? Where should we implement them?

That conversation is starting to shift.

As AI becomes integrated into more systems and more decisions, the question is moving from "should we adopt this?" to "how do we know this is working properly?"

That shift is consequential. Not because adoption stops mattering, but because accountability requires different capabilities and different conversations.

What Adoption Focused On

The adoption phase was about building comfort and capability.

Teams learned the tools. They figured out which use cases actually had a return on investment. They worked through the political questions (is this automating away our jobs?) and the technical questions (does it actually work in our environment?).

The focus was external: "how do we get this technology into our systems?"

This was the right focus. You cannot manage something you have not adopted. You cannot measure something you have not built into your workflows.

But adoption is only the first phase.

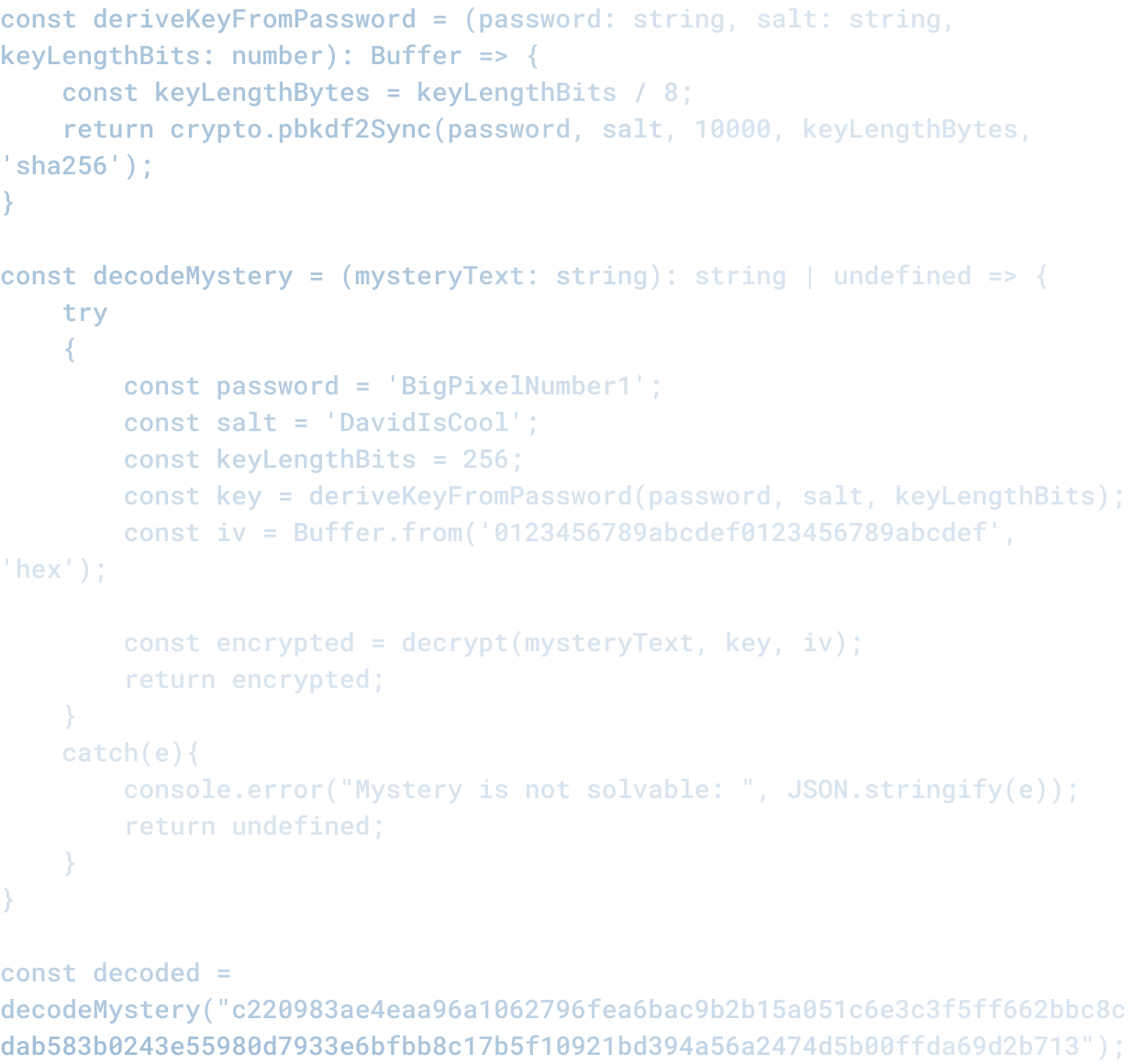

What Accountability Requires

As systems using AI move from "pilot" to "in production, directing business decisions," the conversation needs to shift.

Accountability means:

You can explain why the system made a particular decision. For high-stakes decisions (hiring, lending, content moderation), you need to be able to show how the model arrived at a conclusion, not just that it did.

You have a clear record of what happened. Not just "an AI system decided this," but which system, which version, what inputs, and what outputs. For compliance and for learning, you need that traceability.

You know when the system is failing. A model that works great in testing sometimes produces different results in production. You need monitoring and alerting that tells you when performance drops, when outputs drift, and when the system is encountering inputs it was not trained on.

You have a process for what to do about it. What is the escalation path when the system makes a mistake? Who decides what happens next? How do you adjust?

You can defend the decision to use the system at all. If something goes wrong and someone asks "why did you trust this system with that decision," you need a clear answer. And your answer needs to be based on data, not hope.
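The "clear record" requirement above can be made concrete with a small sketch. This is one possible shape for a decision record, not a standard schema: the class name `DecisionRecord`, its field names, and the example values (`resume-screener`, `talent-ops`, and so on) are all hypothetical and chosen only for illustration.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class DecisionRecord:
    """One traceable entry per AI-assisted decision (hypothetical schema)."""
    system: str                 # which system made the decision
    version: str                # which model or release was running
    inputs: dict[str, Any]      # what the system saw
    outputs: dict[str, Any]     # what it produced
    owner: str                  # person or team accountable for the system
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        # sort_keys keeps key order stable so records are easy to diff and search
        return json.dumps(asdict(self), sort_keys=True)


# Example values are invented for illustration only.
record = DecisionRecord(
    system="resume-screener",
    version="2026.01.3",
    inputs={"candidate_id": "c-1042", "years_experience": 6},
    outputs={"decision": "advance", "score": 0.81},
    owner="talent-ops",
)
line = record.to_json_line()
print(line)
```

Writing one such line per decision to append-only storage is usually enough to answer "which system, which version, what inputs, what outputs" after the fact. What belongs in `inputs` depends on your privacy and retention rules.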

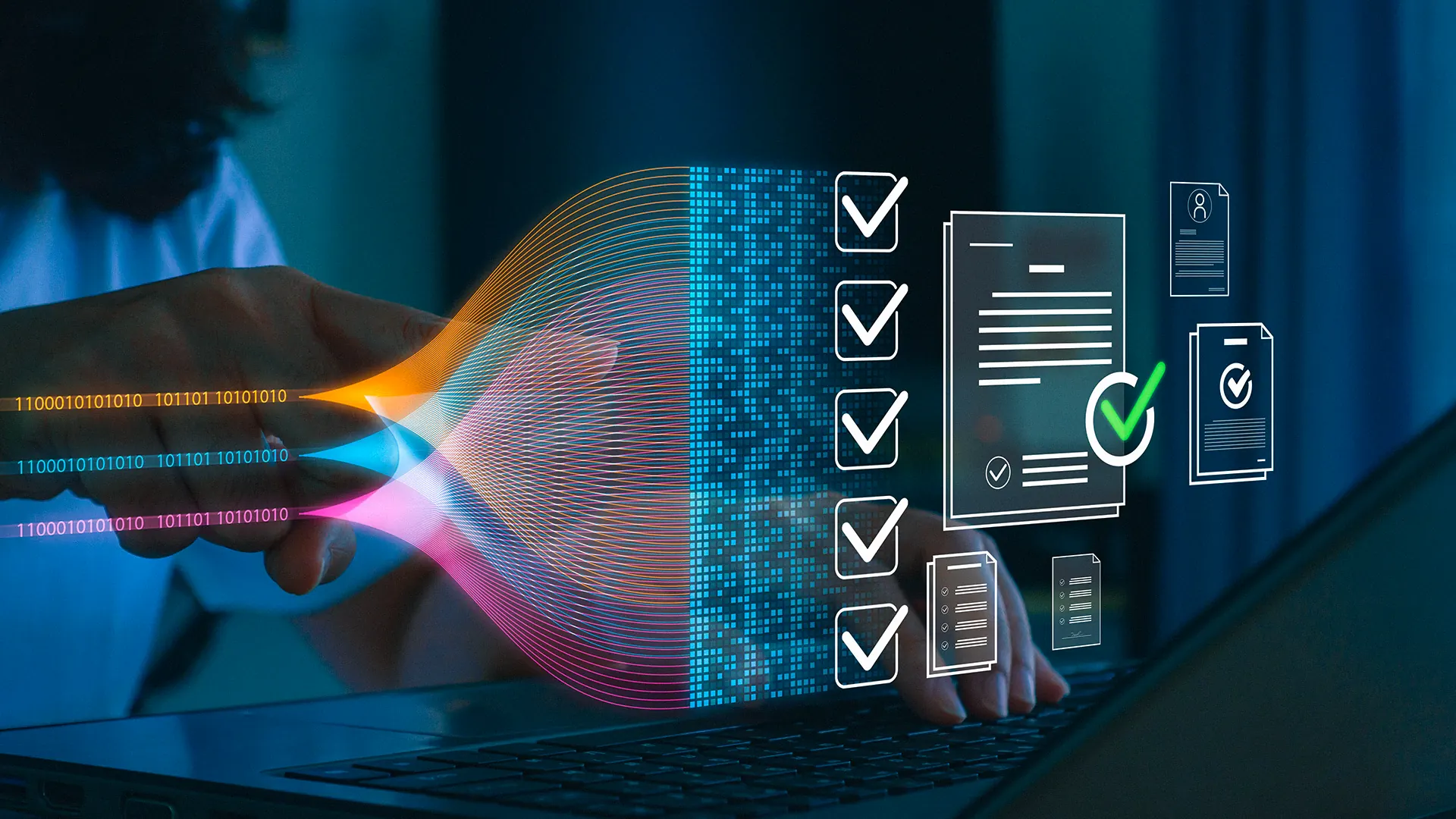

Why This Matters Right Now

Most organizations are not ready for this conversation.

They implemented AI tools quickly. That was the right call. But they did not, in many cases, implement the infrastructure for accountability. They do not have a clear record of what the system did or why. They do not have monitoring. They do not have a clear escalation process.

And now, as AI systems influence more decisions, that gap is becoming a liability.

In regulated industries (financial services, healthcare, government), regulators are starting to ask these questions. The SEC has been asking about AI governance. The FTC is asking about algorithmic transparency. That pressure is starting to spread to less regulated industries as well.

Beyond regulation, customers and employees are starting to ask these questions too. If a company used AI to make a decision that affected them (they were not hired, were not approved for credit, or had their content hidden), they want to know why. That is not an unreasonable request, and it is increasingly a legal requirement.

What Needs to Change

This shift requires three things:

Visibility. You need to know what your AI systems are doing, how often, and what the results are. That means monitoring, logging, and dashboards. It means understanding your data: what is your model seeing? Is it working with the same distribution of inputs it was trained on?

Ownership. Someone needs to own the system. Not "IT" or "data science" in general, but a specific person or team responsible for the system, its performance, and the decisions it makes. That ownership structure needs to be clear to everyone using the system and everyone affected by it.

Process. You need a clear, documented process for what happens when the system fails or produces unexpected results. Do you keep using it while investigating? Do you escalate to a human? Do you shut it off? Under what conditions? Who decides?

None of these things are cheap. They require investment and operational discipline. But they are the difference between "we have an AI system" and "we are accountable for what our AI system does."
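As a rough illustration of how the visibility and process items above can connect, the sketch below compares the mix of one categorical input in production against a baseline sample using the population stability index (PSI), then maps the result to a documented response. The function names, the action labels, and both thresholds are assumptions for this example. Real limits should be set by the system's owner.

```python
import math
from collections import Counter


def population_stability_index(baseline, live, eps=1e-6):
    """PSI between two samples of a categorical input; 0 means identical mix."""
    categories = set(baseline) | set(live)
    base_counts, live_counts = Counter(baseline), Counter(live)
    psi = 0.0
    for cat in categories:
        # eps avoids log(0) when a category is missing from one sample
        p = max(base_counts[cat] / len(baseline), eps)
        q = max(live_counts[cat] / len(live), eps)
        psi += (q - p) * math.log(q / p)
    return psi


def next_action(psi, error_rate, psi_limit=0.25, error_limit=0.05):
    """Map monitoring signals to a response (both limits are assumed values)."""
    if error_rate > error_limit:
        return "shut_off"            # confirmed failures: stop and investigate
    if psi > psi_limit:
        return "escalate_to_human"   # inputs no longer resemble the baseline
    return "keep_running"


# Invented data: the customer mix shifts from 80/20 to 50/50.
baseline = ["retail"] * 80 + ["business"] * 20
live = ["retail"] * 50 + ["business"] * 50

psi = population_stability_index(baseline, live)
print(round(psi, 3), next_action(psi, error_rate=0.01))
```

With this invented data the input mix has shifted enough that the rule returns `escalate_to_human`, even though the measured error rate is still low. The point is that "under what conditions" and "who decides" are written down before the failure, not negotiated during it.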

This Is Not Anti-AI

This shift is not about pulling back from AI. It is about using AI responsibly.

Accountability is what makes it safe to use AI for more things. If you have visibility into what the system is doing, ownership of the outcomes, and a process for addressing failures, you can extend AI into more sensitive decisions with more confidence.

Without it, you are hoping for the best without preparing for the worst.

The organizations that will win in 2026 are not the ones that adopted AI fastest. They are the ones that adopted it responsibly. That means building the accountability infrastructure now, while the stakes are still relatively low.

The shift from "adoption" to "accountability" is not a sign that AI is failing. It is a sign that it is working well enough to matter, and we are finally asking the right questions about what comes next.
