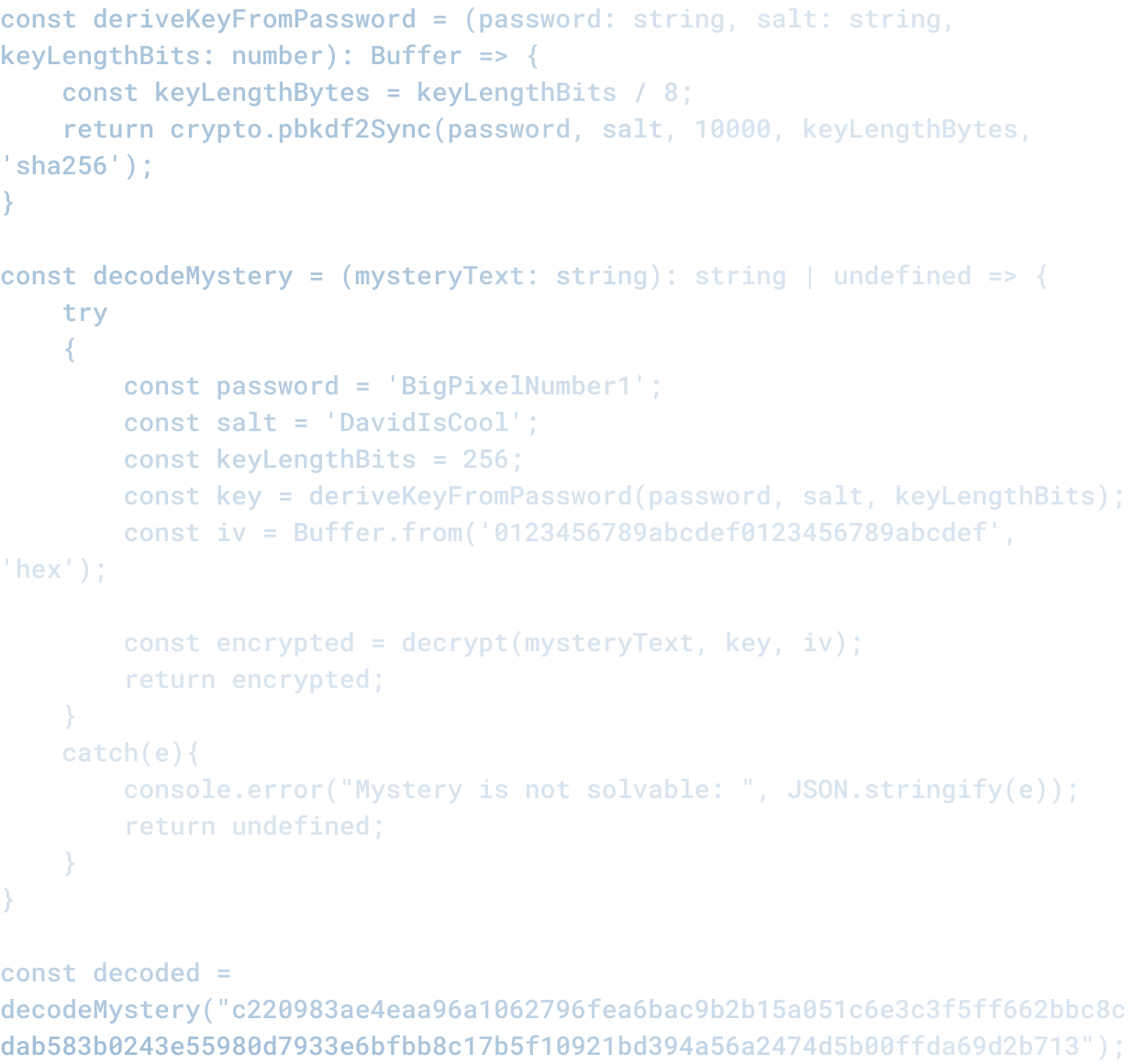

How to Build an AI Stack That Matches Each Job in Your Workflow

Most businesses have the same problem with AI: they pick a single tool and try to make it do everything.

This almost never works well.

A single AI tool might be great for customer support, mediocre for sales outreach, and terrible for code generation. But if you have standardized on that tool, you use it for all three because it is already integrated and everyone is already trained on it.

The better approach is to build a stack of tools that match each job in your workflow.

Start with the Jobs, Not the Tools

The first step is to map out the jobs in your workflow. Not tools. Not software. Jobs.

Examples:

- "Generate code scaffolding for new features"

- "Review pull requests for security issues"

- "Write marketing copy for landing pages"

- "Summarize customer support tickets"

- "Triage product bugs"

- "Draft email responses"

Each job has different requirements, different inputs, different success criteria, and different risks.
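One way to keep the focus on jobs rather than tools is to write each job down as a small record before any tool is discussed. The sketch below is only an illustration: the `Job` class, its field names, and the sample values are assumptions made up for this example, not a prescribed schema.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    """One job in the workflow, described without naming any tool."""
    name: str
    inputs: list[str]
    success_criteria: list[str]
    risks: list[str] = field(default_factory=list)
    requirements: list[str] = field(default_factory=list)


# Illustrative entries only; the details will differ for every team.
jobs = [
    Job(
        name="Summarize customer support tickets",
        inputs=["ticket text", "customer history"],
        success_criteria=["summary states the customer's actual issue"],
        risks=["an urgent escalation gets missed"],
        requirements=["fast response", "works with the ticketing system"],
    ),
    Job(
        name="Review pull requests for security issues",
        inputs=["diff", "repository context"],
        success_criteria=["flags known vulnerability patterns"],
        risks=["false sense of safety"],
        requirements=["GitHub integration"],
    ),
]


def incomplete(job_list: list[Job]) -> list[str]:
    """Return names of jobs still missing inputs, success criteria, or risks."""
    return [
        j.name
        for j in job_list
        if not (j.inputs and j.success_criteria and j.risks)
    ]


print(incomplete(jobs))
```

A job that shows up in `incomplete` is probably not ready for tool evaluation yet, because there is nothing concrete to evaluate a tool against.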

When you understand what you actually need the AI to do, you can match it to the tool that does it best, rather than trying to force a one-size-fits-all solution.

What Each Job Needs

Different jobs need different capabilities. Some examples:

Customer support triage. You need a tool that can read a variety of message formats, understand context, categorize messages, and pull relevant information into a structured format. You need it to be fast (response time matters), reliable, and able to work with your existing ticketing system.

Code generation. You need a tool that knows your stack, follows your coding patterns and standards, generates code that passes your tests, and maintains context across a large codebase.

Marketing copy. You need a tool that understands your brand voice, audience, and market positioning. The output needs to match your tone, be convincing, and drive a specific response (clicks, signups, and so on).

Legal review. You need accuracy, the ability to cite relevant precedent, an understanding of your business context, and auditability.

These are different jobs. They need different tools.

How to Evaluate Tools for Each Job

Once you have mapped the jobs, evaluate tools based on:

Capability. Can the tool actually do what you need? Code generation tools are not all equal. Claude, ChatGPT, Gemini, and others have different strengths. Some are better at certain languages, certain architectures, certain types of tasks.

Integration. Does the tool integrate with the systems you use? If you use Jira, Slack, GitHub, and Notion, the tool needs to plug into those systems, not require manual copy/paste.

Reliability. Is the tool consistent? Does it produce the same quality output every time, or does quality vary dramatically? Does it fail gracefully, or does it fail silently (giving you wrong answers with confidence)?

Cost. What is the cost per unit of work, and how does it compare with the value of that work? If the tool costs 50 cents per task and the 5 minutes it saves on each task are worth 5 dollars, the math works. If the cost is higher than the value, it does not.
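The cost check can be written out as simple arithmetic. This is a minimal sketch that assumes the value of a task comes entirely from time saved, and it uses a hypothetical loaded labor rate of 60 dollars per hour so that 5 minutes saved is worth 5 dollars. Both the rate and the function names are assumptions for illustration.

```python
def net_value_per_task(minutes_saved: float, hourly_rate: float,
                       cost_per_task: float) -> float:
    """Value of the time saved on one task, minus what the tool charges for it."""
    value_of_time_saved = (minutes_saved * hourly_rate) / 60
    return value_of_time_saved - cost_per_task


def break_even_minutes(hourly_rate: float, cost_per_task: float) -> float:
    """Minutes the tool must save per task just to cover its own cost."""
    return (cost_per_task * 60) / hourly_rate


# Assumed numbers: 5 minutes saved, 60 dollars/hour, 50 cents per task.
net = net_value_per_task(minutes_saved=5, hourly_rate=60.0, cost_per_task=0.50)
minimum = break_even_minutes(hourly_rate=60.0, cost_per_task=0.50)

print(f"Net value per task: ${net:.2f}")
print(f"Break-even time saved: {minimum:.1f} minutes")
```

Under these assumptions the tool pays for itself once it saves more than half a minute per task. If the real value of a task is not time saved (for example, a conversion from marketing copy), swap in whatever value estimate fits the job.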

Control. What controls do you have over the tool's behavior? Can you tune outputs? Can you add constraints? Can you make it work specifically for your use case?
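The five criteria above can be combined into a rough per-job score. The sketch below is one possible approach, not a standard method: the 1 to 5 scale, the weights, and the tool names `tool_a` and `tool_b` are all hypothetical placeholders that a team would replace with its own judgments.

```python
CRITERIA = ("capability", "integration", "reliability", "cost", "control")


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of 1-5 scores across the five evaluation criteria."""
    missing = [c for c in CRITERIA if c not in scores or c not in weights]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    total_weight = sum(weights[c] for c in CRITERIA)
    return sum(scores[c] * weights[c] for c in CRITERIA) / total_weight


# Hypothetical weights for a code generation job: capability and
# reliability matter most, cost and control matter least.
weights = {"capability": 3, "integration": 2, "reliability": 3, "cost": 1, "control": 1}

# Hypothetical 1-5 scores from a team's own trial of two candidate tools.
candidates = {
    "tool_a": {"capability": 5, "integration": 3, "reliability": 4, "cost": 2, "control": 3},
    "tool_b": {"capability": 4, "integration": 5, "reliability": 4, "cost": 4, "control": 2},
}

results = {name: weighted_score(s, weights) for name, s in candidates.items()}
best = max(results, key=results.get)

for name, score in results.items():
    print(f"{name}: {score:.2f}")
print(f"Best fit for this job: {best}")
```

The weights belong to the job, not the tool. The same two candidates could rank differently for support triage, where integration and speed might carry more weight than raw capability.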

Building the Stack

Once you have evaluated tools, the next step is integration. A stack is only useful if the tools talk to each other and to your existing systems.

This means:

- Data flows between tools. Output from tool A becomes input to tool B without manual intervention.

- Your business logic sits on top. The stack runs your workflows, not just isolated tools.

- You have visibility into what is happening. Logs, metrics, and debugging tools matter.

- You can swap tools. If you find a better tool for a specific job, you can replace the old one without rebuilding everything.
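The four properties above can be sketched in a few lines. This is a simplified illustration, assuming every tool can be wrapped as a function that takes text and returns text. The `Stack` class and the stand-in tools are hypothetical; a real stack would wrap vendor APIs behind the same kind of interface.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("ai_stack")

# Assumption: each tool is wrapped as a plain text-in, text-out function.
Tool = Callable[[str], str]


class Stack:
    """Maps job names to tools and runs them in sequence."""

    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, job: str, tool: Tool) -> None:
        """Assign a tool to a job; calling again with the same job swaps the tool."""
        self._tools[job] = tool

    def run(self, job_names: list[str], payload: str) -> str:
        """Pass the payload through each job in order, logging every step."""
        for job in job_names:
            tool = self._tools[job]
            start = time.perf_counter()
            payload = tool(payload)  # output of one tool becomes input to the next
            elapsed_ms = (time.perf_counter() - start) * 1000
            logger.info("job=%s tool=%s ms=%.1f",
                        job, getattr(tool, "__name__", repr(tool)), elapsed_ms)
        return payload


# Stand-in tools; real ones would call an AI service.
def keyword_triage(text: str) -> str:
    label = "billing" if "invoice" in text.lower() else "general"
    return f"[{label}] {text}"


def first_sentence_summary(text: str) -> str:
    return text.split(".")[0] + "."


def length_capped_summary(text: str) -> str:
    return text[:20]


stack = Stack()
stack.register("triage", keyword_triage)
stack.register("summarize", first_sentence_summary)

workflow = ["triage", "summarize"]
ticket = "My invoice is wrong. Please fix it soon."
before_swap = stack.run(workflow, ticket)

# Swap the summarizer without touching the workflow definition.
stack.register("summarize", length_capped_summary)
after_swap = stack.run(workflow, ticket)

print(before_swap)
print(after_swap)
```

The workflow is defined in terms of job names, so replacing a tool is a single `register` call. The business logic (which jobs run, in what order) stays where it is.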

The Operational Requirement

Building a stack is not a one-time project. It is an operational discipline. You need:

- Someone responsible for each tool. Who owns evaluation, integration, training, and iteration?

- Regular review of tool performance. Is this tool still the best option for this job? Are there better alternatives? Is it still working?

- Clear criteria for when to swap tools. If a new tool emerges that is better, when would you switch?

- Documentation of how the stack works. Who needs to know how the tools integrate? How do you onboard new team members?
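Swap criteria are easier to follow when they are written down as a rule rather than decided case by case. The sketch below shows one hypothetical rule: switch only when a candidate tool beats the current one by a set margin in several consecutive reviews. The 0 to 100 scoring scale, the margin of 10 points, and the two-review requirement are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Review:
    """Scores (0-100) from one periodic review of a job's tools."""
    incumbent_score: int
    candidate_score: int


def should_swap(reviews: list[Review], min_margin: int = 10,
                min_consecutive: int = 2) -> bool:
    """True if the candidate led by at least min_margin in each of the
    most recent min_consecutive reviews."""
    if len(reviews) < min_consecutive:
        return False
    recent = reviews[-min_consecutive:]
    return all(r.candidate_score - r.incumbent_score >= min_margin for r in recent)


# Hypothetical review history for one job, oldest first.
history = [Review(70, 75), Review(71, 83), Review(70, 82)]

print(should_swap(history[:2]))  # only one of the last two reviews clears the margin
print(should_swap(history))      # both of the last two reviews clear the margin
```

Requiring consecutive wins guards against switching on a single good demo, and the margin leaves room for the cost of migration and retraining.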

The Real Complexity

The complexity is not in the tools. It is in the operational layer on top of them.

Building a one-tool stack is easy. Pick ChatGPT, give everyone access, and call it done.

Building a multi-tool stack that matches your jobs is harder. It requires understanding what you actually need, evaluating options regularly, managing integrations, and maintaining the discipline to improve the stack over time.

But that complexity is where the value is. A stack built for your specific jobs will usually outperform a one-size-fits-all tool on the work that matters to you.
