The Cost of Over-Automation Isn't What You Think

The results look good. Output is up, velocity is real, and the teams that have moved quickly on AI have something to show for it.

That part is true.

Underneath those results is a question the industry has largely agreed to table. Nobody actually knows what this is doing to organizations over time.

We can track output.

We cannot track what happens to how a business thinks when more of its reasoning moves into systems it did not build and cannot fully see, when fewer people stay close to the underlying work, when core capabilities get externalized into tools the organization depends on but does not own.

Those conditions are forming right now, inside organizations that moved fast and are only beginning to understand what they built.

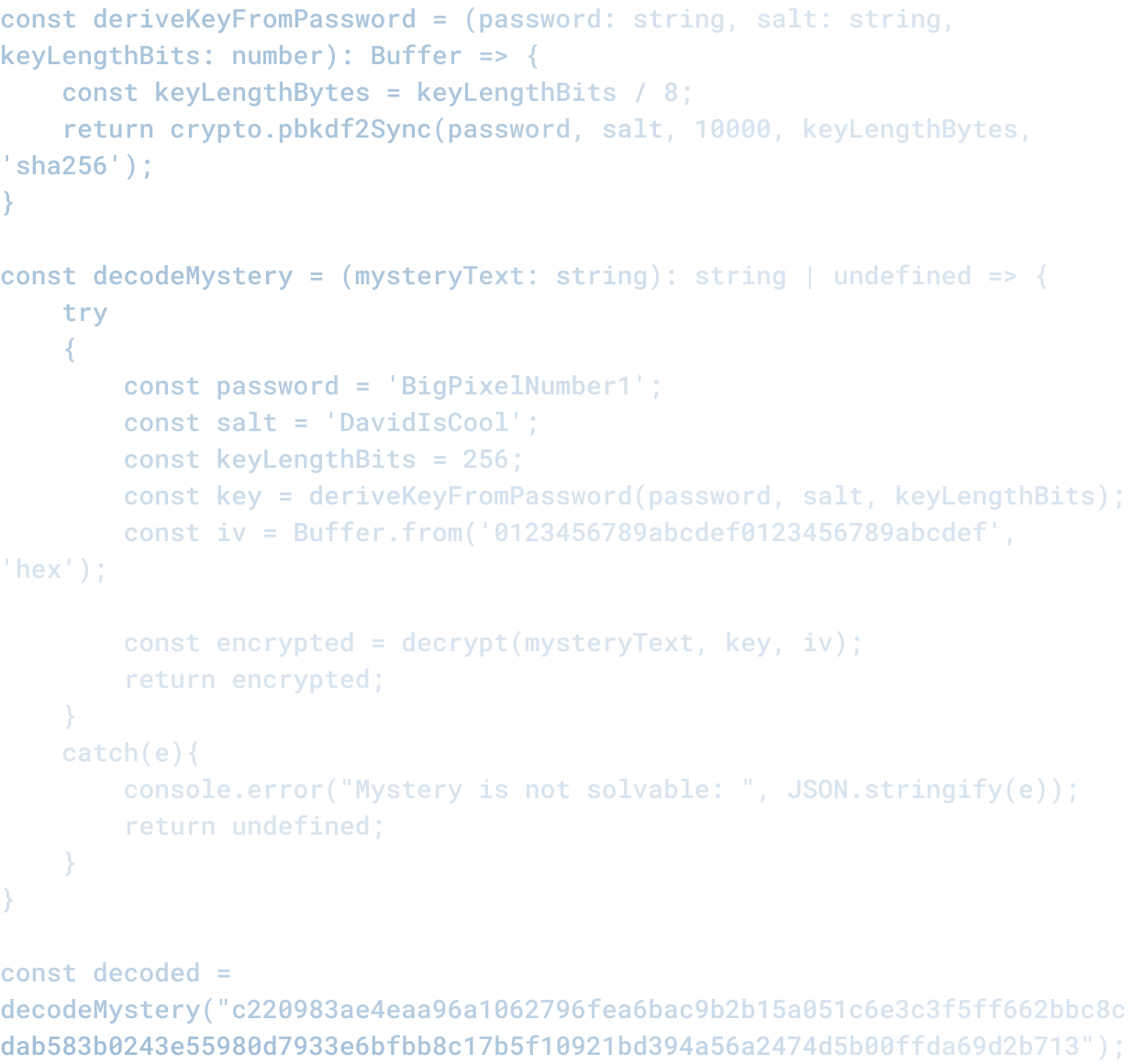

The infrastructure underneath your automation is still being written

Most of today's AI automation runs on infrastructure controlled by three companies.

Microsoft has merged its commercial future with OpenAI to the point where the partnership and the product are the same thing.

Google has woven its own models into Workspace and Cloud deeply enough that opting out of the AI layer increasingly means opting out of the tools your teams already use.

Amazon built Bedrock as the abstraction point for model access across AWS, which means if your infrastructure runs on Amazon, you are already inside that dependency.

The practical consequence is that the infrastructure underneath your automation gets redefined by people whose roadmap has nothing to do with your business.

That has already played out in ways teams felt directly:

- Azure OpenAI changed access tiers and pricing in ways that forced teams to rebuild workflows mid-project

- Salesforce shifted its AI bundling in ways that left organizations locked into integration patterns that no longer matched what the platform was selling

Those were platforms doing what platforms do when they have leverage.

It will keep happening.

The teams that got caught were moving fast and building close to the platform layer, which is what moving fast looks like.

The problem is that building close to a layer that is still being defined means your systems absorb every decision that layer makes going forward.

Most teams have not treated that as a design constraint. The platform keeps proving it should be.

The model is no longer the differentiator

A year ago, the gap between the top models was a real consideration. There is less of one now.

Meta's open-weight LLaMA releases made serious model capability available to anyone willing to run their own infrastructure.

Mistral and a growing set of alternatives have closed performance gaps that used to justify expensive proprietary access. Stanford HAI's research on model convergence has tracked this quantitatively.

For most practical use cases, the distance between frontier models has narrowed considerably.

What that means for decisions already made is significant and mostly unacknowledged:

- If an architectural decision was built around GPT-4 being clearly better than the alternatives, that decision looks different today

- If a system is tightly coupled to a specific provider because of capability that no longer differentiates, the dependency remains while the advantage that justified it has eroded

The model becoming interchangeable does not make the system easier to manage. It makes the system the thing that actually matters.

The question becomes whether the logic driving a given workflow is separated enough from its execution layer that changing the model underneath it is an operational decision rather than a rebuild. Most teams optimized for model selection instead.

The durability lives in the system design.

What accumulates without announcing itself

Gartner, McKinsey, and IBM's Institute for Business Value have each tracked what happens when organizations try to scale AI past early wins.

The same conditions keep surfacing across all three:

- Systems become difficult to trace when something breaks, and the people responsible discover they never had full visibility to begin with

- Data dependencies grow faster than governance can follow, leaving ownership unclear and definitions inconsistent

- Costs shift in ways that stay invisible until a contract renewal forces the accounting

- Teams lose the thread on how decisions are actually being made inside the workflows they built

The system keeps running through all of that.

That is exactly what makes this kind of debt hard to catch.

Here is what it looks like in practice. A growth-stage SaaS company automates its customer success workflows.

Response times improve and the quarterly review looks strong. Six months later there is a churn problem nobody can explain.

The patterns show up in the data but the reasoning behind them does not, because the decisions that shaped those customer interactions never lived anywhere a person could examine.

Nobody can tell you why a specific cohort was handled the way it was. Nobody knows where to start changing it.

That is a design condition, and it is more common than the numbers suggest. At Big Pixel, it is the thing we watch for in every system we build.

Automation that cannot be traced is a liability with a delay on it.

The question the industry has agreed to skip

Saying AI might look unrecognizable in five years gets treated as speculation.

Running complex operations on AI systems and calling them working requires the same kind of assumption, because the longitudinal data to support that conclusion does not exist yet.

The industry has gotten comfortable with one version of the uncertainty and not the other.

Three things remain genuinely unknown, and together they describe one condition rather than three separate concerns.

What sustained AI reliance does to decision quality over time. When a person makes the same kind of consequential decision repeatedly, they develop judgment. The feedback between decision and outcome builds something over time. When a system absorbs that work instead, what the person develops, or stops developing, has not been studied at the scale and duration the question deserves. The answer may be fine. Right now there is no way to know.

How teams change when fewer people stay close to the underlying work. Proximity to a problem is how organizations develop the sensitivity to catch things going wrong. When automation takes over a function, the people who would have built that sensitivity through doing the work do not. Whether that creates a real gap in the organization's ability to course-correct, and how long it takes to show up, remains open.

What happens to institutional knowledge when core work gets externalized. Institutional knowledge lives in people who have done the work long enough to develop judgment about it. Documents capture fragments. The judgment itself, the ability to sense what is going wrong before it becomes a number, belongs to the people close to the work. When that work moves into systems the organization did not fully build, what carries forward and what disappears is still unclear.

Naming these questions makes the case for operating with confidence proportional to what is actually known.

The industry has committed at scale to a direction whose long-term effects remain genuinely open. Treating that experiment as settled does not make the results come in faster.

What holds up

The systems that last are built around a specific logic, and it is worth being concrete about what that means rather than staying at the level of principles.

Can the model be replaced without rebuilding how the business operates? Decision logic needs to sit separately from the tools executing it. If swapping a provider requires rethinking how a workflow operates, the system is carrying more fragility than it should.
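One way to picture that separation is the minimal sketch below. It is an illustration under stated assumptions, not a description of any specific production system: the `TextModel` interface, the two stub providers, and the thresholds in `route_account` are all hypothetical. The point it shows is that the business rule is decided without the model, so swapping the provider changes the wording of a note and nothing else.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


class StubProviderA:
    """Stand-in for one vendor; a real adapter would call that vendor's SDK here."""

    def complete(self, prompt: str) -> str:
        return "summary-from-A"


class StubProviderB:
    """Stand-in for a second vendor with the same interface."""

    def complete(self, prompt: str) -> str:
        return "summary-from-B"


def route_account(days_since_login: int, open_tickets: int) -> str:
    """Business rule owned by the organization. Thresholds are illustrative."""
    if days_since_login > 30 or open_tickets >= 3:
        return "escalate_to_csm"
    return "standard_follow_up"


def handle_account(model: TextModel, days_since_login: int, open_tickets: int) -> dict:
    # The decision is made first, by logic the business can read and change.
    action = route_account(days_since_login, open_tickets)
    # The model only drafts supporting text; it never picks the action.
    note = model.complete(f"Summarize account for action: {action}")
    return {"action": action, "note": note}
```

Calling `handle_account(StubProviderA(), 45, 0)` and `handle_account(StubProviderB(), 45, 0)` returns the same `action` with a different `note`, which is what makes a provider change an operational decision rather than a rebuild.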

Does the organization own its own data definitions? Meaning and context need to live inside the organization, held by people who understand what those definitions are for. When they migrate into a vendor's abstraction layer, recovering them when the vendor's structure changes becomes harder than it should be.
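A rough sketch of what owning definitions can look like in code follows. Everything named here is an assumption made for illustration: the definitions, the `vendor_x` field names, and the thresholds are invented. The idea is that meaning is written down in the organization's own terms, and each vendor's field names are translated at the edge, so a change in the vendor's structure means editing one mapping.

```python
# Definitions the organization owns, in its own words (hypothetical examples).
DEFINITIONS = {
    "active_customer": "Logged in at least once in the last 30 days",
    "at_risk": "Active customer with 3 or more open support tickets",
}

# Vendor field names mapped to internal names. The vendor and its
# fields are invented; only this table changes if the vendor does.
VENDOR_FIELD_MAP = {
    "vendor_x": {
        "last_seen_days": "days_since_login",
        "tickets_open": "open_tickets",
    },
}


def normalize(vendor: str, record: dict) -> dict:
    """Translate a vendor record into the organization's own field names."""
    mapping = VENDOR_FIELD_MAP[vendor]
    return {internal: record[external] for external, internal in mapping.items()}


def is_at_risk(account: dict) -> bool:
    """Implements the 'at_risk' definition above against internal field names."""
    return account["days_since_login"] <= 30 and account["open_tickets"] >= 3
```

Because `is_at_risk` only ever sees internal names, the definition stays readable to the people who own it regardless of which tool supplied the data.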

Can someone explain why a specific decision was made? Outputs need to be traceable to logic that a person who understands the business can examine and evaluate. That logic has to be legible to the people responsible for the outcomes, in a way that goes beyond what a technical audit can surface.
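As a minimal sketch of traceability, assume every automated decision writes a record naming the inputs it saw and the rule that fired. The `DecisionRecord` fields, rule names, and thresholds below are hypothetical, and a real system would write to durable storage instead of an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One automated decision, stored in terms a business owner can read."""

    account_id: str
    inputs: dict
    rule: str
    action: str
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def decide(account_id: str, days_since_login: int, open_tickets: int,
           log: list) -> str:
    """Pick an action and record which rule produced it. Rules are illustrative."""
    inputs = {"days_since_login": days_since_login, "open_tickets": open_tickets}
    if days_since_login > 30:
        rule, action = "inactive_over_30_days", "escalate_to_csm"
    elif open_tickets >= 3:
        rule, action = "three_or_more_open_tickets", "escalate_to_csm"
    else:
        rule, action = "default", "standard_follow_up"
    log.append(DecisionRecord(account_id, inputs, rule, action))
    return action


def explain(account_id: str, log: list) -> list:
    """Return every recorded rule and action for one account, oldest first."""
    return [(r.rule, r.action) for r in log if r.account_id == account_id]
```

With records like these, the question of why a specific cohort was handled a certain way has somewhere to start: filter the log by account and read the rule names.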

Can individual components be replaced without collapsing the workflow? Systems built to evolve are structured so a change in one layer does not travel through everything downstream. Most systems built fast against a moving platform layer break when something underneath them shifts.

We believe that business is built on transparency and trust, and that good software is built the same way.

At Big Pixel, that is the design logic behind how we use AI in our own processes and how we build it into the systems we deliver.

We run thirty to forty percent faster than the industry average because the judgment behind the tools is what makes them hold up.

Anyone can adopt a capability. Building something around it that the people responsible for it can actually understand and change over time is the part that takes experience.

The failure in over-automation does not surface in the demo or the first version that works or the quarterly numbers that follow.

It shows up later, in systems that have become difficult to reason about, dependencies that went unmanaged for too long, and decisions nobody inside the organization can fully account for anymore.

The output can look strong through all of that. The operation underneath tells the real story, and it usually does so later than anyone planned for.
