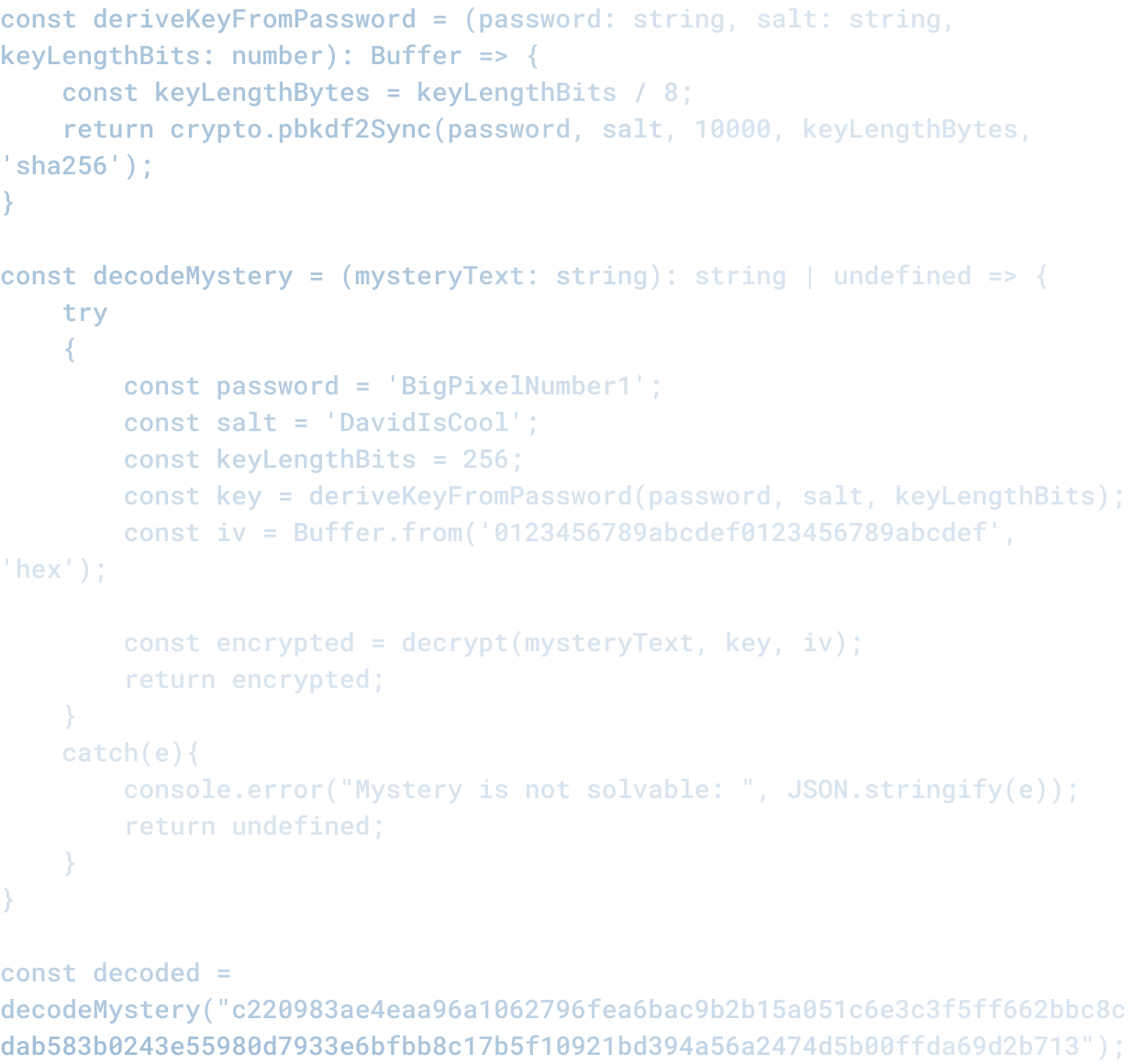

The Current State of Agentic AI in 2026

Agentic AI has been the marquee technology story since OpenAI announced agents, Google launched Gemini AI with agent-like capabilities, and Anthropic released Claude with computer use. Everyone is asking: what can AI agents actually do?

The accurate answer is: less than you think, and more than most are building.

What Agentic AI Actually Is

An agentic AI system is one that can perceive its environment (read files, understand screens, interpret data), reason about what actions to take (plan, sequence, decide), and take actions in that environment (run code, click buttons, write to databases) without human direction at each step.

That is different from a chatbot that waits for every prompt. An agentic system reads your question, decides what it needs to do, does those things, and returns an answer. If it encounters a problem, it can adjust course.
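The perceive, reason, act cycle described above can be sketched as a small loop. This is a minimal illustration, not how any particular product works: `run_agent`, `perceive`, `decide`, and `act` are hypothetical names, and the hard-coded `decide` rule stands in for whatever model would do the reasoning in a real system.

```python
from typing import Callable, Optional


def run_agent(goal: str,
              perceive: Callable[[], dict],
              decide: Callable[[str, dict, list], Optional[str]],
              act: Callable[[str], str],
              max_steps: int = 10) -> list:
    """Loop until decide() returns None (done) or the step budget runs out."""
    history = []
    for _ in range(max_steps):
        state = perceive()                       # perceive the environment
        action = decide(goal, state, history)    # reason about the next step
        if action is None:
            break
        try:
            result = act(action)                 # act in the environment
        except Exception as exc:
            result = f"error: {exc}"             # record the failure so decide() can adjust
        history.append((action, result))
    return history


# Toy environment: the file the agent tries first does not exist.
files = {"report_v2.txt": "revenue: 42"}


def perceive() -> dict:
    return {"available": sorted(files)}


def decide(goal, state, history):
    # Stand-in for a model: stop after a success, otherwise try an untried file.
    if history and not history[-1][1].startswith("error"):
        return None
    tried = {action for action, _ in history}
    for name in ["report.txt"] + state["available"]:
        if f"read:{name}" not in tried:
            return f"read:{name}"
    return None


def act(action: str) -> str:
    _, name = action.split(":", 1)
    return files[name]  # raises KeyError if the file is missing


history = run_agent("find revenue", perceive, decide, act)
for step in history:
    print(step)
```

The first read fails, the failure lands in `history`, and the next pass through the loop picks a different file. That is the "adjust course" behavior in miniature, with no human prompt between the two steps.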

Where Agents Are Actually Useful Right Now

The current sweet spot for agentic AI is in narrowly scoped, high-friction tasks where the agent has deep context and clear success criteria.

Code review and testing: An agentic system can read a code change, understand the codebase, run tests, identify problems, and suggest fixes. GitHub Copilot and similar tools are doing this now at scale. It is working because the scope is narrow (this specific code change), the success criteria are clear (does it pass tests, does it match style guides), and the cost of being wrong is manageable (code review is not the final decision point).

Infrastructure management: An agentic system can see what is running, understand what should be running, notice discrepancies, and make changes to fix them. This is useful because the environment is clearly defined, the state is knowable, and automated action reduces manual toil significantly.
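The "what is running versus what should be running" comparison can be sketched as a plain diff between two state maps. This assumes state can be reduced to a service name and an instance count, which is a simplification; `plan_changes` and the service names are hypothetical.

```python
def plan_changes(desired: dict[str, int],
                 actual: dict[str, int]) -> list[tuple[str, str, int]]:
    """Compare desired and actual instance counts and list the changes needed."""
    changes = []
    for service, want in sorted(desired.items()):
        have = actual.get(service, 0)
        if have < want:
            changes.append(("scale_up", service, want - have))
        elif have > want:
            changes.append(("scale_down", service, have - want))
    # Anything running that is not in the desired state gets flagged for shutdown.
    for service in sorted(set(actual) - set(desired)):
        changes.append(("stop", service, actual[service]))
    return changes


desired = {"web": 3, "worker": 2}
actual = {"web": 1, "worker": 2, "legacy-cron": 1}

changes = plan_changes(desired, actual)
for change in changes:
    print(change)
```

The function only produces a plan. Whether the agent applies that plan on its own or hands it to a person for approval is a separate decision, and keeping the two steps apart makes that decision easy to change.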

Data organization and processing: Reading files, moving data between systems, transforming data into a standard format: these are mechanical tasks that agents handle well. The friction is high (manual work is slow) and the decision-making is clear (follow these rules).

Where Agents Are Overhyped Right Now

The places where agent capabilities are being overstated are instructive.

Replacing customer support staff: The theory is that an AI agent can answer customer questions, resolve issues, and reduce the load on human support teams. The practice has been messier. Agents work well on common, straightforward problems. They struggle when context is ambiguous, when the issue requires judgment, or when customer expectations matter. Most support tickets end up needing a human anyway. The real use case is using agents to handle the easy 20% of tickets so humans can focus on the hard 80%. That is different from agents replacing the team.

Building products from specifications: The theory is that an AI agent can read a spec, write code, test it, and deliver a working product. The practice has shown us repeatedly that specs are usually incomplete, often internally inconsistent, and sometimes describe something that does not actually solve the underlying problem. An agent that builds exactly what the spec says might build exactly the wrong thing. This is not because agents are bad. It is because specifying a complex product is hard.

Making business decisions: The theory is that an agent with access to company data can make decisions: hiring, investment allocation, contract terms, etc. The practice is that these decisions require judgment and context that AI systems do not actually have. An agent can surface relevant information and highlight tradeoffs, but the decision itself still needs a human.

Why the Mismatch Exists

There are three reasons the hype exceeds the reality:

Scope creep: It is easy to see an agent do something useful and imagine it doing a bigger version of that thing. An agent can refactor code, so it must be able to build a codebase. An agent can fix a server config, so it must be able to manage infrastructure. But agent capability does not scale linearly with the size of the task. A bigger task is not just more of the same task; it adds new kinds of complexity.

Responsibility avoidance: There is something appealing about the idea that an agent can "take responsibility" for a decision. But an AI system cannot actually be responsible. It does not care about outcomes, cannot be held legally accountable, and cannot learn from failures in the way a person does. When something goes wrong, the person who deployed the agent is responsible. That accountability cannot be outsourced.

Underestimating judgment: This is the biggest one. Most of the work in most jobs is not mechanics (execute this task) but judgment (what task should be executed, how should it be modified if conditions change, what does the outcome actually mean). Agents are good at mechanics. They struggle with judgment. That distinction is not obvious when you are first experimenting with the technology.

What Is Actually Happening in 2026

The organizations getting real value from agentic AI right now are doing a few things:

They are using agents to extend human capacity, not replace humans. An agent handles routine work so humans can do judgment work. That is different from "automation will replace this job."

They are keeping agents on a tight leash. Access is scoped. Decisions are logged and reviewable. There is an escalation process when something goes wrong. This is more work than "let it run loose," but it is also safer and more sustainable.
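One way to picture scoped access, logged decisions, and escalation is a thin wrapper around every action the agent takes. This is a sketch under assumptions: `GuardedAgent`, `EscalationRequired`, and the action names are hypothetical, and a production setup would enforce permissions in the underlying systems rather than only in application code.

```python
from dataclasses import dataclass, field
from typing import Callable


class EscalationRequired(Exception):
    """Raised when the agent must hand a step to a human."""


@dataclass
class GuardedAgent:
    allowed_actions: frozenset[str]                       # scoped access
    audit_log: list[dict] = field(default_factory=list)   # reviewable record

    def execute(self, action: str, target: str,
                handler: Callable[[str], str]) -> str:
        entry = {"action": action, "target": target}
        self.audit_log.append(entry)  # every attempt is logged, allowed or not
        if action not in self.allowed_actions:
            entry["status"] = "escalated: out of scope"
            raise EscalationRequired(f"{action} on {target} needs human approval")
        try:
            result = handler(target)
        except Exception as exc:
            entry["status"] = f"escalated: {exc}"
            raise EscalationRequired(f"{action} on {target} failed") from exc
        entry["status"] = "ok"
        return result


agent = GuardedAgent(allowed_actions=frozenset({"read_config"}))
agent.execute("read_config", "web-01", lambda t: f"config for {t}")

try:
    agent.execute("delete_volume", "web-01", lambda t: "deleted")
except EscalationRequired as exc:
    print("escalated:", exc)

for entry in agent.audit_log:
    print(entry)
```

The out-of-scope request never reaches its handler, and both attempts end up in the audit log, so a reviewer can see what the agent tried as well as what it did.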

They are measuring outcomes, not activity. The question is not just "how much did the agent do" but "did the outcome improve." An agent that processes 1000 items but creates 100 downstream problems is not useful. An agent that handles 100 items and saves 20 hours of careful review is.
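The difference between activity and outcome can be put into rough arithmetic. The item counts and the 20 hours come from the comparison above; the per-item savings and the cleanup cost per problem are assumed figures for illustration only, and `net_minutes_saved` is a hypothetical helper.

```python
def net_minutes_saved(items: int, minutes_saved_per_item: int,
                      downstream_problems: int, minutes_per_problem: int) -> int:
    """Time saved by the agent minus time spent cleaning up after it."""
    return items * minutes_saved_per_item - downstream_problems * minutes_per_problem


# Assumption: each processed item saves 2 minutes, each downstream problem costs 60.
busy_agent = net_minutes_saved(1000, 2, 100, 60)

# Assumption: 20 hours saved across 100 items is 12 minutes per item, with no cleanup.
careful_agent = net_minutes_saved(100, 12, 0, 0)

print("busy agent:", busy_agent, "minutes")
print("careful agent:", careful_agent, "minutes")
```

Under these assumed costs the high-volume agent comes out negative, while the low-volume one nets the full 20 hours. The exact numbers will differ in any real deployment; the point is that the cleanup term belongs in the measurement at all.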

What Comes Next

As agentic AI matures, we will see better integration with human workflows rather than replacement of them. The interesting product innovation is not "AI agent replaces human job" but "AI agent and human together do better work than either alone."

That requires rethinking how we structure work. It means being clear about what we want the agent to do (this specific category of decision), what inputs it should see (these data sources, not those), and what outcomes we actually care about (speed, accuracy, cost, or something else). It means building process around the agent: how do we monitor it, how do we adjust it, what do we do when it fails.

The agents that will succeed are not the ones that pretend to be fully autonomous. They are the ones that are clearly tools in service of human judgment, not replacements for it.
