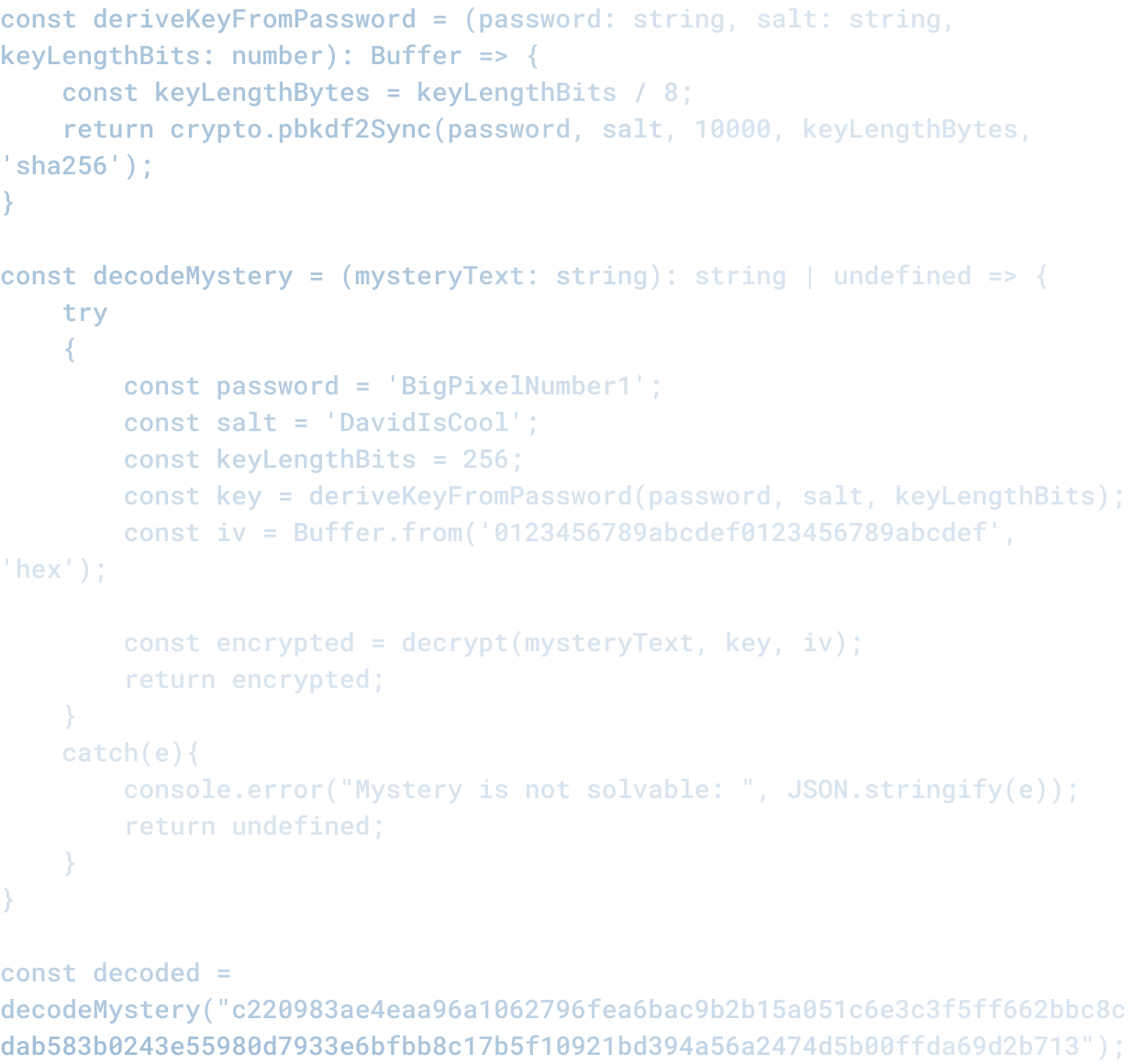

What AI-Assisted Development Gets Wrong

Every few weeks, someone publishes a demo showing AI writing code from a prompt and concludes that software development will fundamentally change.

The demos are genuinely impressive. An AI understands a vague specification and generates a working application. You ship it.

The real process is usually different.

What The Demo Misses

In the demo, the engineer writes a prompt, and code emerges. It is elegant. It works. It is done.

In reality:

You write a prompt. The AI generates code. You review it and realize it does not do what you actually needed. You adjust the prompt. The AI generates different code. You test it and realize it does not handle a case you care about. You ask it to handle that case. It does, but now it is slow. You ask it to optimize. It does, but introduces a bug. And so on.

This is not a design flaw in the AI. It is an insight into what code is actually for.

The Specification Problem

When you write a prompt, you are writing a specification.

Specifications are usually incomplete. They describe the happy path but not the edge cases. They describe what you think you want, not what you actually need. They miss constraints that matter in production.

"Build me a form that collects customer information and stores it." Sounds simple. But: Do you validate email? Do you require a phone number? What do you do if someone does not have a street address? Do you allow international addresses? What does the API return if the request fails? How long do you wait for a response?

Every one of those is a decision. A specification that does not make those decisions explicit is incomplete.
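One way to picture this is to write the open questions down as explicit settings. The following is a minimal sketch in Python, not a real implementation: the `CustomerFormSpec` name, its fields, the default values, and the `validation_errors` helper are all hypothetical, chosen only to mirror the questions above.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomerFormSpec:
    """Each field records one decision the one-line prompt left open.

    All names and defaults here are hypothetical, for illustration only.
    """
    validate_email: bool = True
    require_phone: bool = False
    require_street_address: bool = False
    allow_international: bool = True
    # Used by whatever code submits the form; not read by the validator below.
    request_timeout_seconds: float = 5.0


# Deliberately loose check (something@something.tld), not full address validation.
_EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validation_errors(form: dict, spec: CustomerFormSpec, home_country: str = "US") -> list[str]:
    """Return a list of problems with the form under the decisions in spec."""
    errors = []
    if spec.validate_email and not _EMAIL_PATTERN.match(form.get("email", "")):
        errors.append("email is missing or malformed")
    if spec.require_phone and not form.get("phone"):
        errors.append("phone is required")
    if spec.require_street_address and not form.get("street_address"):
        errors.append("street address is required")
    # A missing country is assumed to be the home country (another decision).
    if not spec.allow_international and form.get("country", home_country) != home_country:
        errors.append("international addresses are not accepted")
    return errors
```

The same form data can pass under one set of decisions and fail under another. Nothing in the code says which set is right for your business.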

AI can generate code from an incomplete specification. What it cannot do is know which decisions are right. That requires understanding the business context, the constraints, and the user needs.

The Edge Case Problem

Demo code usually works great for the case it was designed for.

Real systems need to handle hundreds of edge cases. What happens when the network is slow? What happens when a user provides data in an unexpected format? What happens when you scale from 100 users to 1000? What happens when the third-party service you depend on is down?

These are not problems with the code. These are constraints on the system. Handling them means making decisions about how the system should behave under adverse conditions.

An AI can help implement those decisions once they are made. It cannot make the decisions. That is not a limitation of the AI. That is a fundamental property of software design.
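As a rough illustration, here is what a few of those decisions look like once someone has made them. This is a sketch under assumptions, not a recommended policy: the `call_with_fallback` name, the retry count, the backoff schedule, and the choice to return a fallback value rather than raise are all hypothetical.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_fallback(
    fetch: Callable[[], T],
    fallback: T,
    max_attempts: int = 3,
    backoff_seconds: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch(), retrying on timeouts and connection failures.

    Decisions encoded here (all assumptions for this sketch):
    - how many times to try before giving up (max_attempts)
    - how long to wait between tries (exponential backoff)
    - what to return when the dependency stays down (fallback)
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except (TimeoutError, ConnectionError):
            # Wait only if another attempt is coming; doubles each time.
            if attempt < max_attempts:
                sleep(backoff_seconds * 2 ** (attempt - 1))
    return fallback
```

Whether three attempts is right, or whether stale fallback data is acceptable at all, depends on what the system is for. The code only records the answer.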

The Architecture Problem

The prompt usually describes a feature or a small application. What it usually does not describe is how that feature fits into the larger system.

Writing code in isolation is easier than writing code that works as part of a larger system. A function that passes its tests may still fall over when it is called a million times a day. A service that runs fine in development may not hold up inside a distributed system where services can fail independently.

Integrating with a larger system means understanding the architecture, the constraints, and the failure modes. You cannot do that from a prompt.

The Maintenance Problem

Code is read far more often than it is written. A piece of code that was written in 5 minutes might be read and modified by your team for the next 5 years.

That means that the code needs to be understandable. It needs to be consistent with the rest of the codebase. It needs to have tests that make the behavior explicit. It needs to have comments explaining the why, not just the what.
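A small sketch of what that can look like in Python. The `normalize_email` function and its rule are hypothetical, made up for this example. The point is the shape: a comment that explains why, and a test whose name states the behavior.

```python
def normalize_email(address: str) -> str:
    """Trim whitespace and lowercase the domain of an email address."""
    local, sep, domain = address.strip().rpartition("@")
    if not sep:
        raise ValueError(f"not an email address: {address!r}")
    # Why: domain names are case-insensitive, but the part before the "@"
    # can be case-sensitive on some mail servers, so it is left untouched.
    return f"{local}@{domain.lower()}"


def test_normalize_email_lowercases_only_the_domain():
    # The test name and assertion document the behavior for the next reader.
    assert normalize_email("  Pat.Smith@Example.COM ") == "Pat.Smith@example.com"
```

Someone reading this in five years can see what the function does and why it stops short of lowercasing everything.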

AI can generate code, but that code is usually not ready to be part of a codebase. It is a starting point for a conversation with your team about how this should actually be done.

What AI-Assisted Development Actually Does Well

None of this means AI has no place in development. It is extremely valuable, just not in the way the demos suggest.

AI is good at:

Boilerplate and scaffolding. Generate the structure of a project, the basic files, the standard patterns. The developer refines from there.

Documentation and test writing. An AI can generate tests from code, generate documentation from code, or take a test and suggest code that implements it. These are mechanics that AIs handle well.

Incremental improvement. Given a piece of code, an AI can suggest optimizations, refactorings, and fixes. It is good at the "now that I understand the context, what should I change" part.

Exploration and ideation. An AI can generate options. Multiple approaches to a problem. Some will be good, some will be bad. The human evaluates them and picks the best.

What it is bad at:

Deciding what to build. Should this be a cache or a queue or a database? Should we optimize for speed or for maintainability? Should this be a library or a service? These are decisions. AIs can help you think through them, but they cannot make them.

Integration and systems thinking. Making code fit into a larger system, understanding failure modes, designing for scale. These require knowledge of the context that is not in the prompt.

Judgment about correctness. Is this solution actually solving the problem? Or is it a technically correct solution to the wrong problem? That is a human judgment call.

How This Actually Works

At Big Pixel, we use AI to write code. But the model is different from what the demos suggest.

A human engineer decides what needs to be built. AI generates options or scaffolding. The engineer reviews it and makes decisions. The engineer integrates it with the larger system. The engineer writes tests and documentation. The engineer gets feedback and iterates.

AI is a tool in that process. It makes the engineer faster at certain steps. It does not replace the engineer's judgment about what the system should do or how it should work.

The mistake a lot of organizations are making is treating AI-generated code as finished code, or treating a prompt as a complete specification. Neither assumption holds.

Code is not done until it is tested, integrated, documented, and understood by the people who will maintain it. A specification is not complete until it has accounted for edge cases, constraints, and the integration points with the rest of the system.

AI can speed up the writing part. Everything else is still human work.
