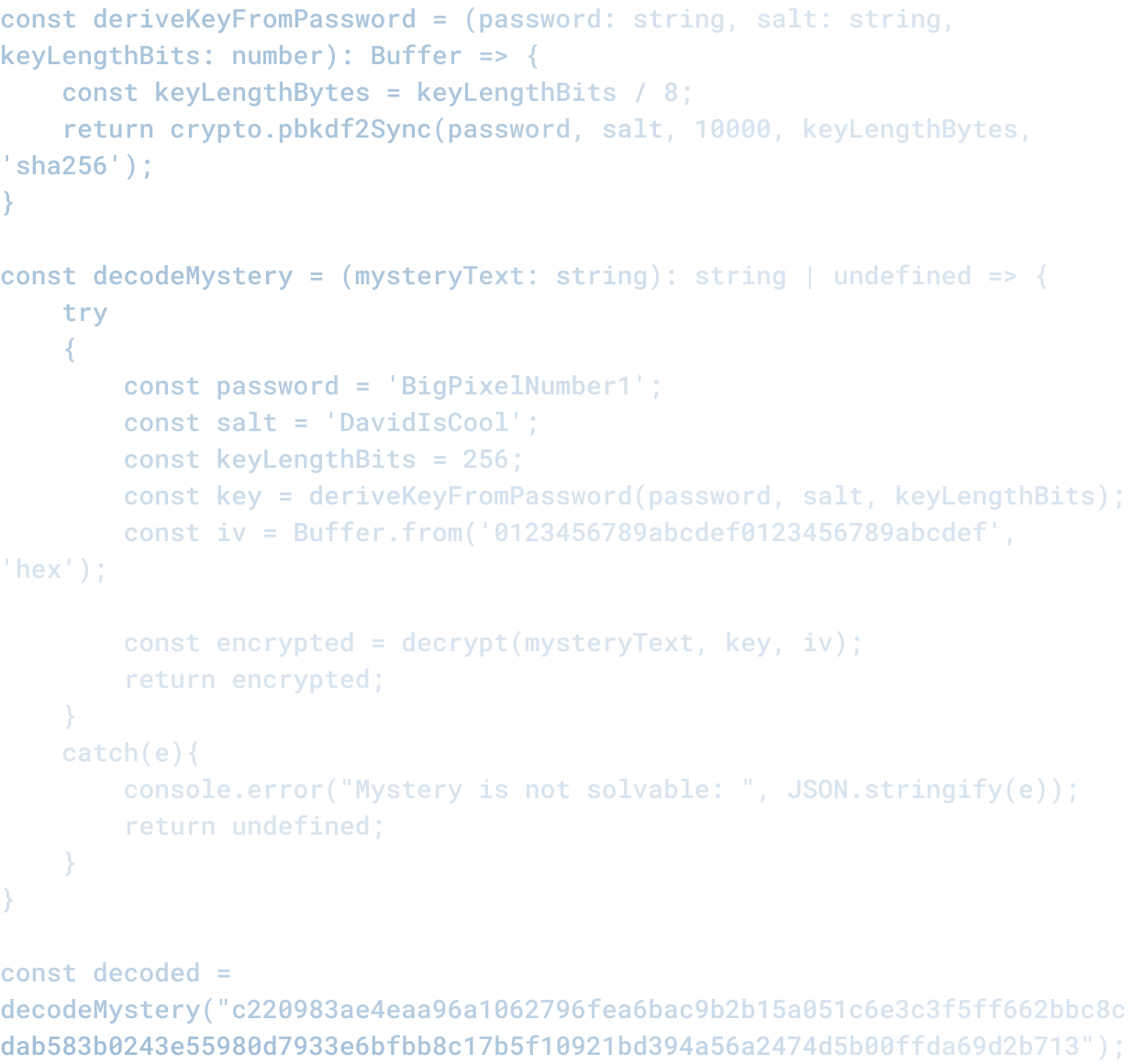

What Most Teams Skip Before Adopting AI

Most teams think the hard part of AI adoption is choosing a tool and getting it working.

That is not the hard part.

The hard part is figuring out what problems you actually have and which ones AI can solve.

The Default Playbook

The standard playbook is:

1. Leadership reads about a competitor or a vendor using AI

2. Leadership decides "we need AI"

3. A team is tasked with implementing it

4. The team picks a tool (ChatGPT, Claude, etc.)

5. Some people try it and it seems useful

6. Rollout happens

7. After 3-6 months, people are still using it, but nobody has formally measured whether it actually helped

This is adoption theater. You have adopted AI. That does not mean it is solving anything.

What Actually Needs to Happen First

Before you pick a tool, you need to answer these questions:

What is the actual problem? Not "we need AI" but "what is slow, expensive, or broken that AI might help with." Is it that writing takes too long? That code has too many bugs? That decision-making is inconsistent? That information is scattered across multiple systems?

How do you know it is a problem? Can you measure it? If you say "writing is slow," do you have data on how slow? How long does it actually take? How many hours per week does your team spend on it? Without measurement, you cannot know whether it is actually a problem worth solving.

What would solving it look like? If you fixed this problem, what would change? How would you know? If you say "AI will make us faster," faster at what? How much faster? What is the business outcome? If the answer is "I do not know," you are not ready to implement AI.

Is AI actually the right tool? Not all problems are AI problems. Some problems are process problems (you are doing things in a stupid order). Some are organizational problems (the right information is not in the right place). Some are skill problems (people do not know how to do the work). An AI tool will not fix any of those. It will make them more expensive by adding another layer of complexity.

The Assessment Phase

If you can answer those questions, the next step is an assessment phase.

This means:

Pick one small team or one workflow. Measure the baseline. How long does the work take now? What does quality look like? What are the pain points? You need a clear picture of the before state.

Implement the AI tool in that one place. Run it for a defined period (4-6 weeks). Let people adjust. Let them figure out how to actually use it.

Measure the impact. Did it get faster? Did quality improve? Did people like it? What was harder? What unexpected things happened?

Decide. Did it work? If yes, what would it take to roll out to other parts of the organization? If no, what would need to change for it to work?
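The baseline-versus-pilot comparison above can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the function name `compare_pilot`, the choice of hours per task as the metric, and the numbers are all assumptions made up for the example. Your own metric might be defect counts, review turnaround, or something else entirely.

```python
from statistics import median


def compare_pilot(baseline_hours, pilot_hours):
    """Compare typical time per task before and during a pilot.

    Both arguments are lists of hours spent per task (hypothetical data
    you would collect yourself). The median is used so that one unusually
    slow task does not dominate the summary.
    """
    if not baseline_hours or not pilot_hours:
        raise ValueError("both periods need at least one measurement")
    base = median(baseline_hours)
    pilot = median(pilot_hours)
    return {
        "baseline_median_hours": base,
        "pilot_median_hours": pilot,
        # Negative means the work got faster during the pilot.
        "percent_change": (pilot - base) * 100 / base,
    }


# Made-up numbers for illustration only.
baseline = [4.0, 5.0, 6.0, 5.0, 5.0]
pilot = [3.0, 4.0, 5.0, 4.0, 4.0]
summary = compare_pilot(baseline, pilot)
print(summary)
```

A single percentage like this only covers the "did it get faster" question. Quality, satisfaction, and the unexpected side effects still need their own measurements, and a handful of data points is a signal to investigate, not proof.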

This is boring. It is not sexy. It does not generate headlines. It is also the only way to know whether AI is actually helping.

What Goes Wrong When You Skip This

Most teams skip this phase. They are excited about the technology. Leadership wants results. There is pressure to move fast.

What happens:

People use the tool but do not integrate it into their workflows properly. They use it sporadically. The tool seems to help but you cannot tell if you would have gotten the same result a different way.

You measure adoption ("how many people are using this") instead of impact ("did this improve the outcome"). Adoption is easy to measure and feel good about. Impact is harder and might be disappointing.

You build dependencies on the tool before you understand what it is actually good for. Now you have to keep using it even if it does not work.

You cannot tell whether you are overpaying for the tool or underinvesting in it, because you do not know how much value you are getting.

You lose credibility with the team because you implement something that does not actually help, and people resent the time investment.
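The gap between adoption and impact described above is easy to see with numbers. The sketch below is a rough illustration under stated assumptions: the `PersonWeek` record, the tasks-per-week metric, and the data are all hypothetical, and comparing tool users with non-users is a crude stand-in for a controlled pilot, not a substitute for one.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PersonWeek:
    """One person's weekly output before and after rollout (hypothetical)."""
    used_tool: bool
    tasks_before: int
    tasks_after: int


def adoption_rate(records):
    """Share of people who used the tool at all."""
    return sum(r.used_tool for r in records) / len(records)


def impact_estimate(records):
    """Average change in output for users minus the change for non-users."""
    users = [r.tasks_after - r.tasks_before for r in records if r.used_tool]
    others = [r.tasks_after - r.tasks_before for r in records if not r.used_tool]
    if not users or not others:
        raise ValueError("need both users and non-users to compare")
    return mean(users) - mean(others)


# Made-up data: three of four people use the tool, but output barely moves.
records = [
    PersonWeek(used_tool=True, tasks_before=10, tasks_after=11),
    PersonWeek(used_tool=True, tasks_before=8, tasks_after=8),
    PersonWeek(used_tool=True, tasks_before=12, tasks_after=11),
    PersonWeek(used_tool=False, tasks_before=9, tasks_after=9),
]
adoption = adoption_rate(records)
impact = impact_estimate(records)
print(f"adoption: {adoption:.0%}, estimated impact: {impact} tasks/week")
```

In this invented example, a dashboard showing 75% adoption would look like a success, while the impact estimate shows no change in output at all.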

How to Know You Are Ready

You are ready to adopt an AI tool when:

You have measured the current state and identified a specific problem you are trying to solve.

You have assessed the tool on a small scale and have data showing whether it actually helps with that problem.

You have defined what success looks like (metrics, timelines, rollout plan).

You have buy-in from the team that will be using it (not just from leadership).

You have a plan for what to do if it does not work as well as hoped.

Until you can check those boxes, you are not ready. You are just trying to adopt technology for the sake of adopting technology.

The Long View

The teams that will succeed with AI are not the fastest ones. They are the thoughtful ones.

They take time to understand their problems. They run small pilots before big rollouts. They measure impact instead of just activity. They adjust when something does not work.

It is slower. It is less exciting. It is also the only way to actually build value.
