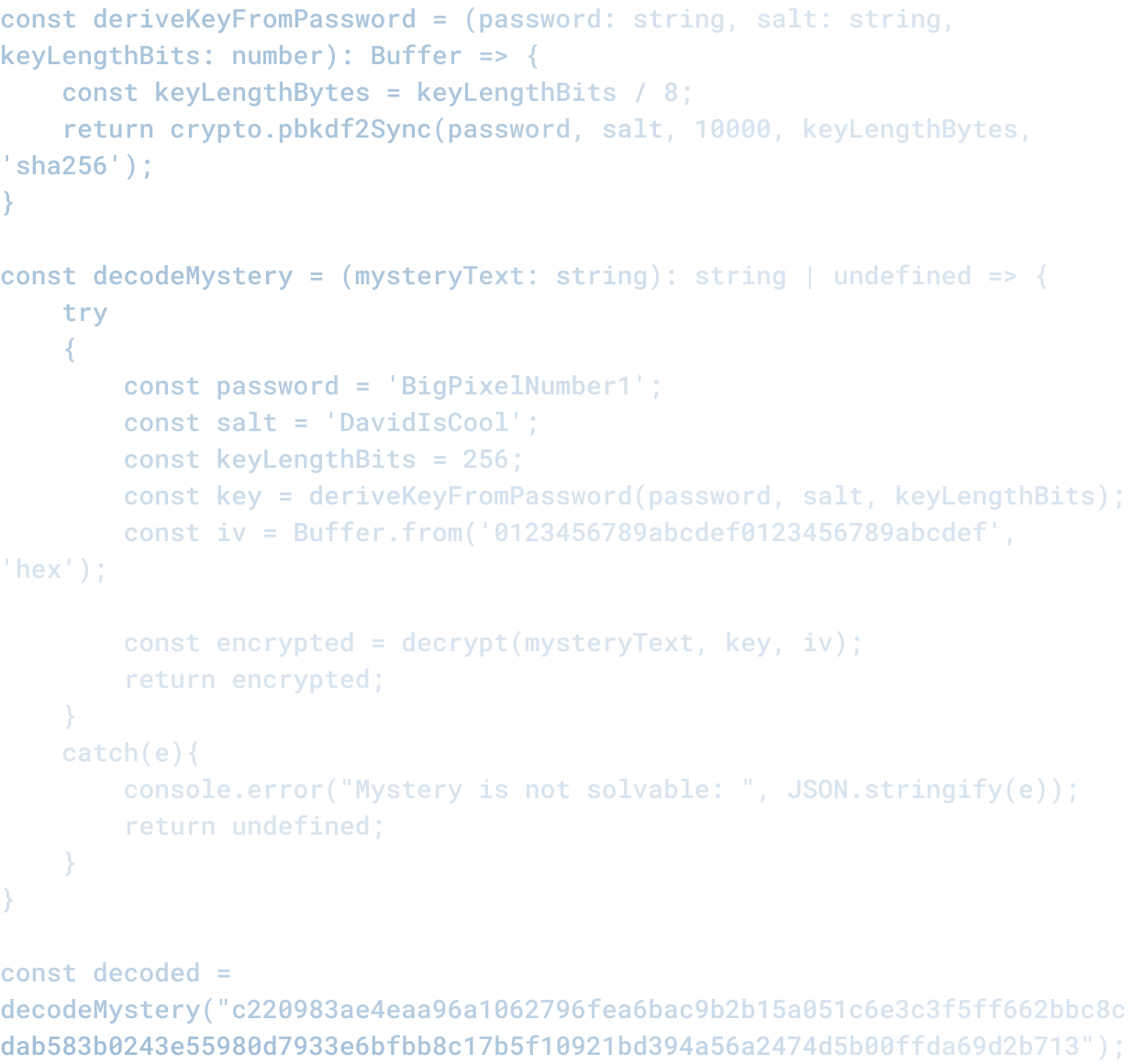

When AI Is Part of Delivery, Secure-by-Default Isn’t Optional

Autonomous AI systems that can take action in your business infrastructure represent a new security risk.

Not because AI is inherently insecure. But because autonomous systems expand the attack surface and compress the time to impact.

If an AI system is compromised, it does not just exfiltrate data. It takes actions: moves money, changes configurations, modifies records, executes commands. And it does these things continuously until someone notices.

That is different from an attacker getting access to a database. In that case, the attacker copies the data, and you eventually notice and revoke the access. When an AI system is compromised, the attack keeps running in real time, amplified by the system's own capabilities.

What Changes When AI is Autonomous

When a human uses your system, they can only do what a human can do. If they want to move 100 million dollars across thousands of accounts, they have to set up each transfer by hand. That is slow, and it is conspicuous.

When an AI system uses your system, it can do things at machine speed and scale. An AI system can move 100 million dollars in the time it takes to read a prompt. It can modify a million records in seconds. It can disable your security systems in minutes.

This is not a threat. It is a feature. It is also a liability if the system is compromised.

The Threat Model

When you add an AI system to your infrastructure, the threat model expands:

Prompt injection attacks. An attacker puts malicious instructions in the data that the AI system reads. The AI system follows the instructions. If the AI system has access to your infrastructure, the instructions get executed at scale. A comment in a document, a title in an email, a customer name in a form: any of these could be an attack vector.

Model poisoning. An attacker modifies the training data or the model itself to cause it to behave differently. A poisoned model might pass all your tests and then do something completely different in production.

Compromised credentials. An AI system typically has credentials (API keys, database credentials, etc.) that it uses to take action. If an attacker gets those credentials, they have the same access as the system. If the system has broad access (which many do), the attacker has broad access too.

Scaling of human errors. A human makes a mistake: they delete the wrong record, run the wrong command. The mistake affects one record. An AI system makes the same mistake: it deletes a million records, runs a command a million times. The human error becomes a disaster.

How Secure by Default Works

Secure by default means:

Narrow permissions. The AI system has access only to the specific data and actions it needs. Not "read everything and write everything." Specific. Limited. Revocable.

Explicit approval for high-impact actions. When the AI system wants to do something that could significantly impact your business (delete, modify, move money), it gets approval. From a human. Before it acts.

Audit trails. Everything the AI system does is logged: who asked it to do what, what it did, and what the result was. So that when something goes wrong, you can trace it.

Kill switches. You can revoke access to the AI system, disable it, or roll back its actions. Quickly. Without having to track down where it has tentacles into your infrastructure.

Monitoring. You know when the system is behaving abnormally. When it tries to access something it should not, when it does something at unusual scale, when it does something outside its normal pattern. You know, and you can respond.
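To make the first four controls concrete, here is a minimal Python sketch of a single gate that every AI-initiated action passes through. It is an illustration under stated assumptions, not a production design: `ActionGate`, `ActionDenied`, the action names, and the `approver` callback (a stand-in for a real human approval step) are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


class ActionDenied(Exception):
    """Raised when the gate refuses to run an action."""


@dataclass
class ActionGate:
    """Hypothetical wrapper that every AI-initiated action must pass through."""

    allowed_actions: set[str]                        # narrow permissions (allowlist)
    high_impact: set[str]                            # actions that need human sign-off
    approver: Callable[[str, dict[str, Any]], bool]  # stand-in for a human approval step
    enabled: bool = True                             # kill switch state
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def kill(self) -> None:
        """Kill switch: refuse every action from now on."""
        self.enabled = False

    def _record(self, requester: str, action: str,
                params: dict[str, Any], outcome: str) -> None:
        # Audit trail: who asked for what, and what happened.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "requester": requester,
            "action": action,
            "params": params,
            "outcome": outcome,
        })

    def execute(self, requester: str, action: str,
                params: dict[str, Any], handler: Callable[..., Any]) -> Any:
        # Checks run in order: kill switch, allowlist, then approval.
        if not self.enabled:
            reason = "denied: kill switch engaged"
        elif action not in self.allowed_actions:
            reason = "denied: action not permitted"
        elif action in self.high_impact and not self.approver(action, params):
            reason = "denied: approval refused"
        else:
            reason = None

        if reason is not None:
            self._record(requester, action, params, reason)
            raise ActionDenied(reason)

        # Only reached when every check passed.
        result = handler(**params)
        self._record(requester, action, params, "executed")
        return result


# Usage: reads are allowed outright, refunds need approval.
gate = ActionGate(
    allowed_actions={"read_invoice", "issue_refund"},
    high_impact={"issue_refund"},
    approver=lambda action, params: False,  # stand-in: a reviewer who always says no
)
invoice = gate.execute("agent-1", "read_invoice", {"invoice_id": 42},
                       lambda invoice_id: {"id": invoice_id})
```

The design choice worth noting is that denials are logged as well as successes, so the audit trail also shows what the system tried to do and was stopped from doing. A real deployment would presumably enforce the same limits at the credential level too, since an in-process check does nothing if the underlying API key is stolen.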

What This Looks Like In Practice

A system with these properties is not frictionless. An AI system that needs approval for every high-impact action is slower. A system with narrow permissions might do less than you want. Audit trails add overhead. Kill switches might not exist yet, so you have to build them.

That is the point. Security has a cost. The cost is friction. The benefit is that when something goes wrong, the impact is limited.

This is the tradeoff every organization using autonomous AI systems needs to make. How much friction are you willing to accept to keep the blast radius small?
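One way to turn that question into a number is an action budget: a cap on how many actions the system may take in a given time window. The sketch below is a hypothetical illustration in Python. `ActionBudget`, its parameters, and the limits used are assumptions made for this example, and the injectable `clock` argument exists only so the behavior can be checked without waiting.

```python
import time
from collections import deque
from typing import Callable


class ActionBudget:
    """Allow at most max_actions within any window_seconds span."""

    def __init__(self, max_actions: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._clock = clock
        self._timestamps: deque[float] = deque()

    def try_spend(self) -> bool:
        """Return True and record the action if the budget allows it."""
        now = self._clock()
        # Drop timestamps that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_actions:
            return False  # over budget: caller should pause and alert a human
        self._timestamps.append(now)
        return True


# Usage: at most 100 record modifications per minute (an arbitrary example limit).
budget = ActionBudget(max_actions=100, window_seconds=60.0)
allowed = budget.try_spend()
```

A lower limit means more friction and a smaller blast radius. A mistake that would have touched a million records instead stops at the cap and waits for someone to look at it.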

Why This Matters Now

Most organizations are building AI systems fast, without the security infrastructure. They are doing it because the technology is new and the pressure to move is high.

That is fine as long as the AI system is not doing anything critical. But as AI systems move into production and start making real decisions or taking real actions, the lack of security infrastructure becomes a liability.

Once the system is in production, retrofitting security is hard. It means redesigning the system, rearchitecting permissions, building audit trails retroactively. It is much easier to build it in from the start.

At Big Pixel, we have seen multiple cases where an organization built an autonomous AI system fast and then had to rebuild it when they realized the security implications.

They could have avoided that by baking in security from the start. Not perfect security. Not frictionless systems. Just systems where the blast radius is limited and the audit trail is clear.

The Standard to Expect

As regulation catches up (which it will), the standard will probably be:

If an AI system can take action on your behalf, you need explicit controls on what it can do, approval before it takes high-impact actions, audit trails of what it did, and the ability to revoke its access or roll back its actions.

That is not a guess. That is what compliance frameworks are already starting to require. Organizations that wait for regulation to mandate this will be playing catch-up. Organizations that build it now will have an advantage.
