The Big Pixel Approach to AI Integration: Workflow First, Features Second

Somebody on your board asked about your AI roadmap last quarter, or your investors did, or a competitor announced something and the silence on your end started to feel expensive.

The answer that came back from your team was reasonable. Yes, we can integrate AI. Yes, we can have something in production by Q3.

The path of least resistance is to add an AI feature to the product you already have, and that path looks productive even when it is the wrong one.

The reason it is the wrong one rarely shows up in the planning meeting. It shows up six months later, when the feature is live and the adoption numbers have not moved.

What Workflow First Actually Means

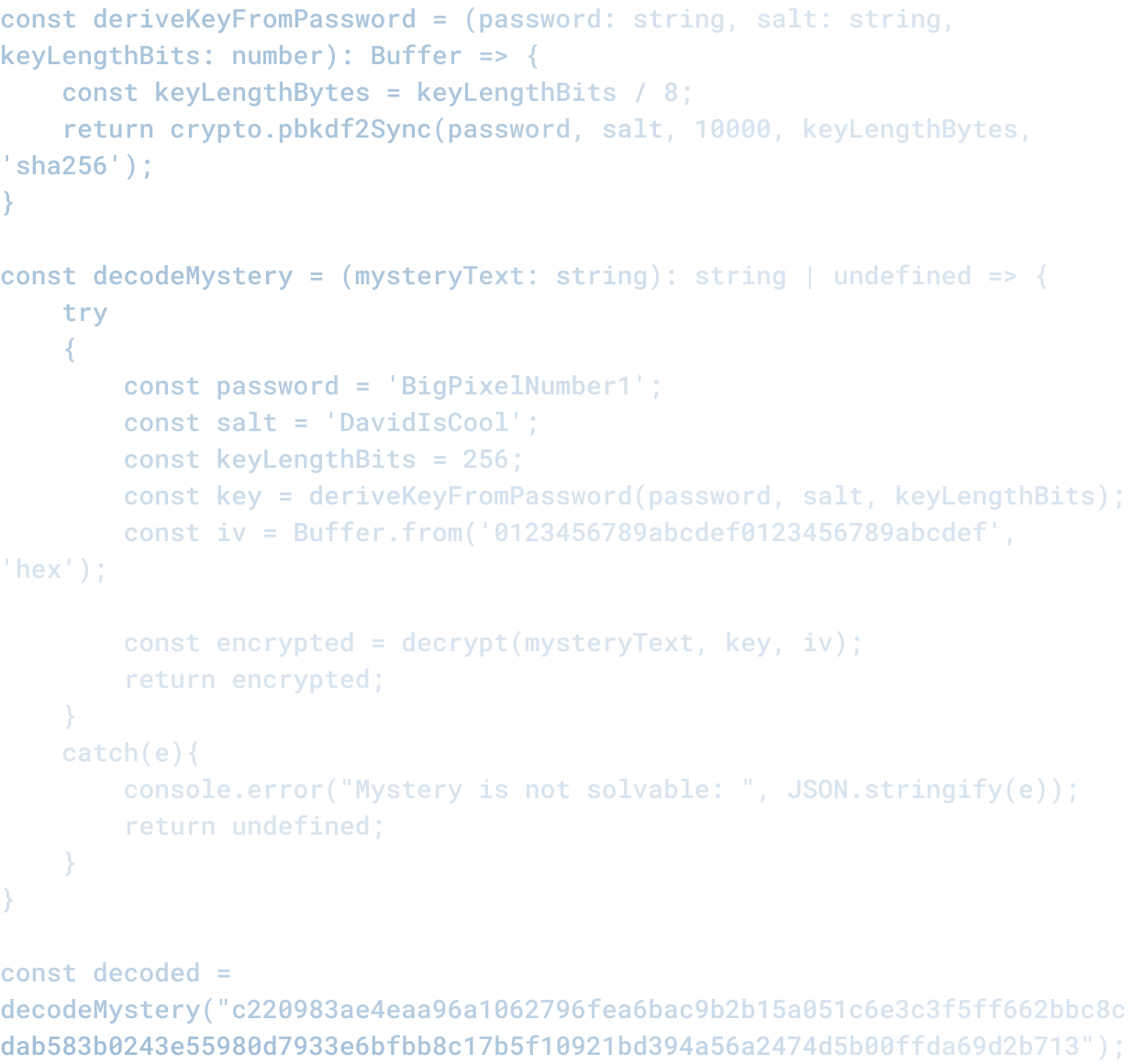

The question that determines whether an AI integration produces value or noise is not which model to use. It is where in the work AI actually belongs.

Most of the AI integrations being announced right now answer the first question and skip the second. They get built fast and feel productive while they are being built.

The problem is that they sit on top of an existing workflow without changing how the work happens. The user then has to choose between using the new AI feature and using the parts of the product that already worked.

Most users choose the parts that already worked.

A workflow-first integration starts somewhere different. The first conversation is about the work itself. We ask:

- Where decisions get made inside the product

- Which of those decisions cost the user time or accuracy

- Where a model trained on the right data could make those decisions easier instead of adding a new place the user has to look

The answers determine whether AI belongs anywhere in the build at all, and if it does, where exactly. By the time we get to the question of which model, the architecture is already mostly designed.
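One way to picture that screening is a minimal Python sketch. The class, its field names, and the example decision points are all hypothetical, invented here for illustration; they are a simplification of the three questions, not a tool or a formal method.

```python
from dataclasses import dataclass


@dataclass
class DecisionPoint:
    """One place in the product where a user makes a decision (hypothetical model)."""
    name: str
    costs_time_or_accuracy: bool  # does this decision slow users down or invite mistakes?
    model_fits_in_place: bool     # could a model help right here, without a new place to look?


def ai_candidates(points):
    """Return the names of decision points that pass both screening questions."""
    return [
        p.name
        for p in points
        if p.costs_time_or_accuracy and p.model_fits_in_place
    ]


# Made-up example workflow; the names and flags are assumptions, not client data.
workflow = [
    DecisionPoint("invoice approval", costs_time_or_accuracy=False, model_fits_in_place=False),
    DecisionPoint("weekly summary", costs_time_or_accuracy=True, model_fits_in_place=True),
    DecisionPoint("account lookup", costs_time_or_accuracy=True, model_fits_in_place=False),
]

print(ai_candidates(workflow))  # ['weekly summary']
```

An empty result is a valid outcome: it corresponds to the case where AI does not belong in the build at all.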

Where Feature First Integration Fails

The Air Canada chatbot case is the cleanest example of what feature first looks like when it goes wrong. Air Canada deployed an AI chatbot into a customer service context, and the chatbot gave a passenger incorrect information about bereavement fares.

When the passenger brought a claim, Air Canada argued before the tribunal that the chatbot was a separate entity and that the airline therefore had no responsibility for what it said. The tribunal rejected that argument and held the airline liable.

The chatbot did what it was built to do.

The failure was in the integration decision that put it there in the first place.

Somebody put an AI system into a workflow where users were making real decisions during emotionally charged moments, and the architecture did not account for what would happen when the AI was wrong.

The cost of that decision cascaded:

- First on a grieving customer who relied on what they were told

- Then on the airline before the tribunal

- Then on every other airline that watched the case and realized their own AI deployments had similar exposure

That is what feature first looks like at scale.

The chatbot was added because chatbots can be added, and the architecture decision about where AI belonged inside the customer experience was never made.

What Workflow First Looks Like in Practice

Witherspoon Rose Culture is a wholesale rose grower that came to us needing software no off-the-shelf platform could deliver.

Their operation was highly specific, and the work spanned multiple departments with handoffs that mattered:

- Orders and customer account management

- Inventory and spray mix tracking

- Automated end-of-month financial processes and tax reporting

- Mobile access for field staff working in the rose fields

- E-commerce sync with Shopify

Before AI was on the table, we built Quickrose, a fully custom operations platform on a React frontend with a Laravel API and Azure-hosted infrastructure.

The architecture mapped to how the business actually runs.

When AI integration came into the conversation, the question was where it could add something the existing workflow could not deliver on its own.

The answer was reporting. Synthesizing patterns across the operations data was the kind of work a model could meaningfully amplify, and it sat at a moment where the team was already making decisions about growing cycles, inventory, and customer relationships.

That is where AI lives in the build.

What is worth naming is where AI was not used. There were several places in Quickrose that looked like obvious candidates for AI features, and we said no:

- The order entry flow needed deterministic accuracy, not synthesis

- The mobile field app needed speed and clarity for staff working in the field

- The end-of-month financial automation produces outputs regulators expect to be deterministic, not generated
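The pattern behind those refusals can be sketched as a routing table that defaults to deterministic handling. This is an illustrative Python sketch, not Quickrose's actual code: the task names paraphrase the areas described above, and `Handling`, `ROUTES`, and `handling_for` are hypothetical names.

```python
from enum import Enum


class Handling(Enum):
    DETERMINISTIC = "deterministic"
    MODEL_ASSISTED = "model_assisted"


# Hypothetical routing table: each part of the workflow is assigned a handling
# mode explicitly, as an architecture decision rather than a per-feature choice.
ROUTES = {
    "order_entry": Handling.DETERMINISTIC,
    "field_app": Handling.DETERMINISTIC,
    "month_end_financials": Handling.DETERMINISTIC,
    "reporting": Handling.MODEL_ASSISTED,
}


def handling_for(task: str) -> Handling:
    """Look up how a task is handled; anything unlisted stays deterministic."""
    # A model has to be opted into for a task; it is never the default.
    return ROUTES.get(task, Handling.DETERMINISTIC)


print(handling_for("reporting").value)    # model_assisted
print(handling_for("order_entry").value)  # deterministic
```

The design choice worth noticing is the default: a part of the workflow nobody has evaluated yet gets deterministic handling until someone makes the case for a model.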

Witherspoon now runs their full growing operation on a single, purpose-built platform with the AI living exactly where it earns its place.

Why This Decision Has to Be Made Before the Build

The window for getting AI architecture right is the period before development starts. After that, the cost of changing direction climbs steeply:

- The system is already in production

- Any change has to unwind decisions other parts of the product depend on

- The original team is often gone or assigned to other work

Several times over the past year, a client has come to us with an AI feature already in production that was not working, and each time the conversation was harder than it would have been at the architecture stage.

The shape of the conversation is also different at that point:

- At the architecture stage, the question is what to build

- Post launch, the question is how to fix what is already there without breaking what users are already using

The tradeoffs are worse and the options are narrower.

The version of the AI integration that gets to live is rarely the one anybody would have designed if they were starting fresh.

How Big Pixel Runs This Conversation

This decision gets made in the free Exploratory session that opens every project we take on.

The session runs two to four hours, the conversation is mapped to the actual workflow of the business, and we ask the questions that the path of least resistance would skip:

- Where in this product do users make decisions that AI could affect?

- What happens to those users if the AI is wrong?

- Which decisions inside the workflow are worth amplifying with a model and which are worth leaving alone?

- What does the cost model look like at real usage rather than demo usage?

- Which features are worth building because they will hold up under real conditions, and which look attractive in the meeting but would erode trust later?
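The cost question in that list comes down to simple arithmetic, and a rough sketch shows why demo numbers mislead. Every figure below (user counts, request rates, tokens per request, and the per-token price) is a made-up assumption for illustration, not a quote from any provider, and the function name is hypothetical.

```python
def monthly_model_cost(active_users, requests_per_user_per_day,
                       tokens_per_request, usd_per_million_tokens, days=30):
    """Estimate monthly model spend in USD from a set of usage assumptions."""
    total_tokens = (active_users * requests_per_user_per_day
                    * tokens_per_request * days)
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Demo usage: a handful of internal testers (assumed numbers).
demo = monthly_model_cost(active_users=5, requests_per_user_per_day=10,
                          tokens_per_request=2_000, usd_per_million_tokens=5.0)

# Real usage: the same feature in front of the whole user base (assumed numbers).
real = monthly_model_cost(active_users=2_000, requests_per_user_per_day=10,
                          tokens_per_request=2_000, usd_per_million_tokens=5.0)

print(f"demo: ${demo:,.2f}/month, real: ${real:,.2f}/month")
# demo: $15.00/month, real: $6,000.00/month
```

With these assumed inputs the only thing that changed between the two estimates is the number of active users, and the monthly figure moved from $15 to $6,000. A real estimate would also need to account for how request rates and prompt sizes shift once actual users arrive.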

By the end of the session, the client either has a fixed-fee estimate that reflects an architecture we are confident in, or they have several hours of free strategic thinking to take back to their team.

We have walked plenty of clients out of AI features they came in asking for, and we have built features they did not initially know they needed.

Both outcomes are products of the same conversation, which is the conversation that has to happen before the build.

We host and maintain what we build, which means we are accountable for what comes after launch in a way that firms operating on a build-and-walk model are not.

We believe that business is built on transparency and trust, and that good software is built the same way.

AI integration decided before the workflow is mapped almost never honors that standard, regardless of how impressive the technology behind it is.

The pressure to add AI to your product is real and it is not going away. Boards keep asking, and competitor announcements will keep landing in your inbox.

The pressure itself is something you cannot eliminate. What you can do is find a partner who is willing to make the architecture conversation harder than it would otherwise be. The firm worth working with is the one that will tell you where AI does not belong inside your product, and why, before any of it gets built.
